What is the Future of Music?

This is certainly not the easiest question - but one that deserves speculation nonetheless.

Since music is such an integral part of our daily lives, to ask “what is the future of music?” is really to ask a question about ourselves, and about how music will continue to shape the way we live.

The future of music will most likely follow the same trends we are seeing in modern technology. It will be highly social, much like social media; it will become increasingly computer-based and A.I.-driven; and, lastly, it will serve as a window to the past, held open by musicians who continue to create music in more traditional ways.

Social media allows for the nearly instant sharing of new music

Let’s examine three possibilities for the future of music. Keep in mind that these are, of course, speculative and in no way certain.

First, it may help to briefly look at what music is, the intention behind it, and how it relates to technology.

The Beginning of Art and Where it’s Going

The earliest known paintings date back at least 40,000 years. They are among the first evidence we have of people seeing their world, wishing to interpret it, and then representing it in a new way.

Art has followed that same method ever since. It has always been a way to take in our surroundings and create something that reflects our perspective.

The medium truly doesn’t matter. Be it painting, sculpture, dance, photography, or film, the intention is the same.

We take our immediate sensation of the world, internalize it, and then manifest it into something new and uniquely human.

What changes during this process is the technology with which we create art, and the way we interpret our surroundings.

As the technology changes, and as we use past art and creations to interpret our world, the art changes and takes new and exciting forms.

How does this relate to music?

Music, as an art form that shares this intention, follows the same pattern. Although its purpose remains the same, the way it presents itself changes.

For example, imagine trying to record Queen’s operatic masterpiece “Bohemian Rhapsody” on a phonograph. Would it have been possible? Definitely not. Its dense, layered vocals depended on multitrack tape overdubbing, which the phonograph could not have supported.

Furthermore, could Freddie Mercury have written it without previously being exposed to, and inspired by, opera? Almost certainly not.

In other words, how we express ourselves through art, and the art we create, are inextricably tied to both the technology available to us and the art that came before.

All this is to say that music will almost certainly follow the path of emerging technology and build upon the cultural identities that it and other art forms have already created.

This leads us to the question: what current technology is shaping how we create music?

Music for the Sake of Social Media and Online Communication

Over the past two decades, there has been a shift in how we communicate. Messages that once required face-to-face interaction can now be sent almost instantly, across almost any distance.

We are the first generation that can communicate anytime, anywhere, without being tied to a stationary device.

For artists, this means that people no longer need to pack into a gallery to see your work, or huddle around a radio to hear your music. They can listen anywhere, anytime, on devices that are becoming increasingly intertwined with their daily lives.

The audience is immediate, and the barriers to finding and distributing art have dropped drastically.

For example, let’s look at the process of distributing a song.

Forty years ago, an artist usually needed a record deal to distribute a song, because manufacturing vinyl records and shipping them across the country was enormously expensive.

If an artist wanted an international audience, that expense rose dramatically.

Contrast that with the distribution process of today. For a very small fraction of the cost, an album or song can be distributed worldwide almost instantly.

And even that cost disappears if you use free distribution services like YouTube or SoundCloud.

With music this easy to release, and that barrier to entry gone, more people will decide to create and release their own music.

(In fact, this has already happened. The saturation of the musical landscape can be seen simply by scrolling through the new releases section of any music website.)

As our communication becomes increasingly dependent on technology, and releasing music becomes easier, many people will use music not as a means to build a career, but as a social endeavor or a form of communication.

This social use of music will only grow as the process of making music becomes easier.

Perhaps this sounds far-fetched, but consider the music-making process today.

With an hour of your time, you could download a sample, loop it, sing or rap over it, add effects using presets, export it as an MP3, and send it either directly to your friends, or indirectly using social media.

The end result may not be perfect, but it’s still an option for anyone with a computer.

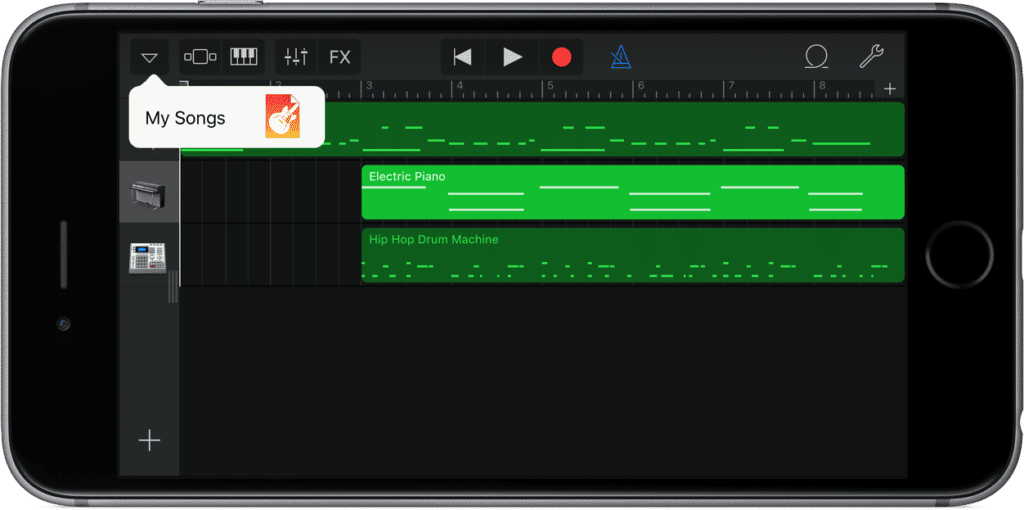

Now imagine the capabilities of a cell phone 20 years from now. It has a better-designed, better-sounding microphone and can process more data, faster.

On this phone is an application that can create customizable or algorithmically generated loops. It can process effects and offers surprisingly good-sounding presets that use predefined measurements to balance the sound.
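The article doesn’t specify which “predefined measurements” such a preset would use. One simple, hypothetical possibility is a preset that measures a recording’s average level and scales it to a fixed target. The Python sketch below does this with root-mean-square (RMS) level; the function names and the target value are illustrative assumptions, and real mastering tools generally rely on more sophisticated, perceptual loudness measures.

```python
import math

def rms(samples):
    """Root-mean-square level of samples in the range -1.0..1.0."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def normalize_to_db(samples, target_db=-14.0):
    """Hypothetical 'balance' preset: scale samples so their RMS level
    matches target_db (decibels relative to full scale).

    The -14 dB default is an arbitrary illustrative value, not a standard.
    """
    current = rms(samples)
    if current == 0:
        return list(samples)  # silence: nothing to scale
    target = 10 ** (target_db / 20)  # convert dB to a linear amplitude
    gain = target / current
    # Hard-limit to -1.0..1.0 so no sample exceeds full scale
    return [max(-1.0, min(1.0, s * gain)) for s in samples]
```

For instance, a very quiet recording such as `[0.01, -0.01, 0.01, -0.01]` passed with `target_db=-20.0` comes back scaled to an RMS level of about 0.1, which corresponds to -20 dB.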

With this application, the user is able to record their voice, quickly produce a song, and export it to a social media platform or to a larger online distributor like Spotify.

If this sounds strange or unlikely, think again. Basic versions of this technology are already available on today’s smartphones. Do they sound perfect? No, but they make it easy for almost anyone to create music.

As this technology improves, and recording and mixing music becomes easier and more immediate, the barriers to entry will become virtually nonexistent. When this happens, making music will become a social endeavor available to almost everyone.

There’s no way to tell what this means for artistry, or whether music will become better or worse overall. But we can say with near certainty that music creation will become possible for more people.

As a result, we can expect music to continue on its current decentralized path. The means of creating and releasing music are no longer held by a select few; soon, they will be available to almost anyone.

Music Driven by AI and Algorithm Technology

A.I., or artificial intelligence, has been part of popular discussion for decades, but only recently has it started to look like a practical reality.

In short, A.I. is the ability of a computer not only to store and repeat information, but to learn from it and to find new ways of interpreting and producing information.

A useful comparison is the brain: a mechanism that can learn not only about its environment but also about itself, and in turn begin to learn in new ways.

This can get complex quickly, and we won’t go too deeply into it here; there are certainly others better equipped to discuss the topic.

But before moving on, here are some quick definitions to clarify:

An algorithm is a set of rules or steps that a computer follows.

An A.I. uses algorithms but also implements general reasoning and mechanisms for self-correction or adaptation.

Although A.I. is still in its early stages, there is no telling how capable the technology will become once it is fully developed.

To illustrate this, imagine a machine that could analyze every Beatles song. It could take note of the melody, the structure, the timbre of the instrumentation, and even more nuanced details like each recording’s total harmonic distortion and perceived loudness.

It could then cross-reference this information with how people received each song, via metrics like listening time, or whether the song was “favorited” or “saved.” This could even be cross-referenced with each listener’s region, other interests, or whatever other aspect of those individuals it deemed relevant. All of this information could be used to determine the most likable and influential aspects of the Beatles.

Now suppose this machine could also generate all the instrumentation needed to recreate and reimagine these songs. It could draw on a library of similar instrumentation, or perhaps more unorthodox instrumentation and vocal stylings, and combine these sound samples in the style of Paul McCartney’s and John Lennon’s songwriting (and maybe even Ringo’s).

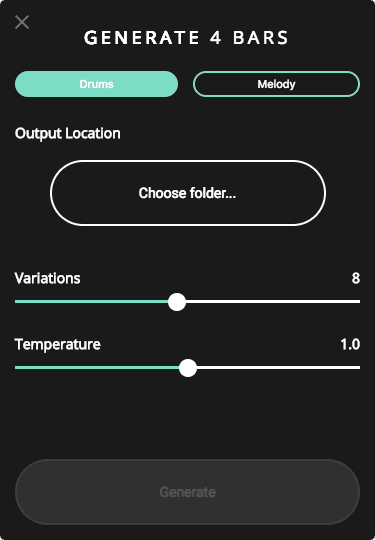

Google's music AI already allows for some of these functions

With all of this ability, it could create a song that had completely new sonic characteristics but still embodied everything we love about the Beatles.

If this seems unbelievable, consider that it isn’t far from what modern technology can already do.

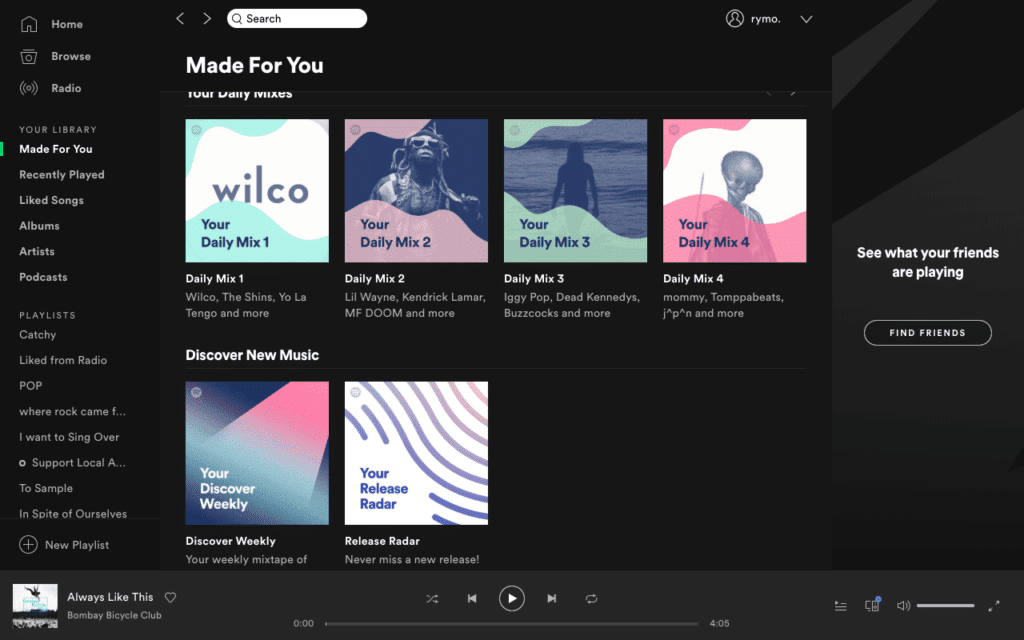

We already know that Spotify records user metrics. We know this because it delivers “Discover Weekly” playlists that relate our listening behavior to the similar behavior of other users.
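Spotify’s actual recommendation system is far more complex and isn’t described here. The general idea of relating one listener’s behavior to that of similar listeners is commonly called collaborative filtering. The Python sketch below is a minimal, hypothetical illustration of that idea: the users, songs, and play counts are invented, and the `cosine` and `recommend` helpers are our own, not part of any Spotify API.

```python
import math

# Hypothetical play counts: user -> {song: number of plays}. Invented data.
plays = {
    "ana":   {"Song A": 12, "Song B": 5, "Song C": 0, "Song D": 0},
    "ben":   {"Song A": 10, "Song B": 4, "Song C": 7, "Song D": 0},
    "chloe": {"Song A": 0,  "Song B": 1, "Song C": 0, "Song D": 9},
}

def cosine(u, v):
    """Cosine similarity between two users' play-count vectors."""
    songs = set(u) | set(v)
    dot = sum(u.get(s, 0) * v.get(s, 0) for s in songs)
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)

def recommend(user, plays, k=1):
    """Rank songs the user hasn't played by other users' plays,
    weighted by how similar each of those users is to this one."""
    mine = plays[user]
    scores = {}
    for other, theirs in plays.items():
        if other == user:
            continue
        sim = cosine(mine, theirs)
        for song, count in theirs.items():
            if mine.get(song, 0) == 0 and count > 0:
                scores[song] = scores.get(song, 0.0) + sim * count
    ranked = sorted(scores, key=lambda s: scores[s], reverse=True)
    return ranked[:k]

print(recommend("ana", plays))
```

Here, Ana’s listening overlaps most with Ben’s, so the song Ben plays that Ana hasn’t heard, “Song C,” ranks first. A real service would work with millions of listeners and many more signals, but the principle of “people who listen like you also liked this” is the same.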

We also know that this data is currently used to find “gaps in the marketplace,” where listeners’ demand is not being fully met. This is done using metrics similar to those in the Beatles example above, and the gaps are then filled with new or similar artists.

With this recorded data and the patterns that emerge from it, it will eventually become possible for Spotify, or any company with access to this information, to feed it into an A.I.-based system.

Again, this technology is not far from reality.

A number of A.I.-based music generators are already in development. Among the most notable are Google’s Magenta and Sony’s Flow Machines, which use artificial intelligence to generate music from source material. Flow Machines was even used to create “Daddy’s Car,” a 2016 song written in the style of the Beatles.

Google’s Magenta is open source, and you can currently download it for free.

The more information and user metrics these machines have to work with, and the more efficient they become at creating music, observing the audience’s response, and responding with new and improved content, the more likely A.I. is to become a dominant force in popular music creation.

Although I’ll refrain from judging this possibility, it may be a cause for concern for artists everywhere.

Music as a Window to the Past

As with most emerging technologies, there will be those who reject it. Although many listeners and artists will follow the path that technology lays in front of them, many will try to diverge from it, whether to be individualistic or to uphold a certain artistic standard.

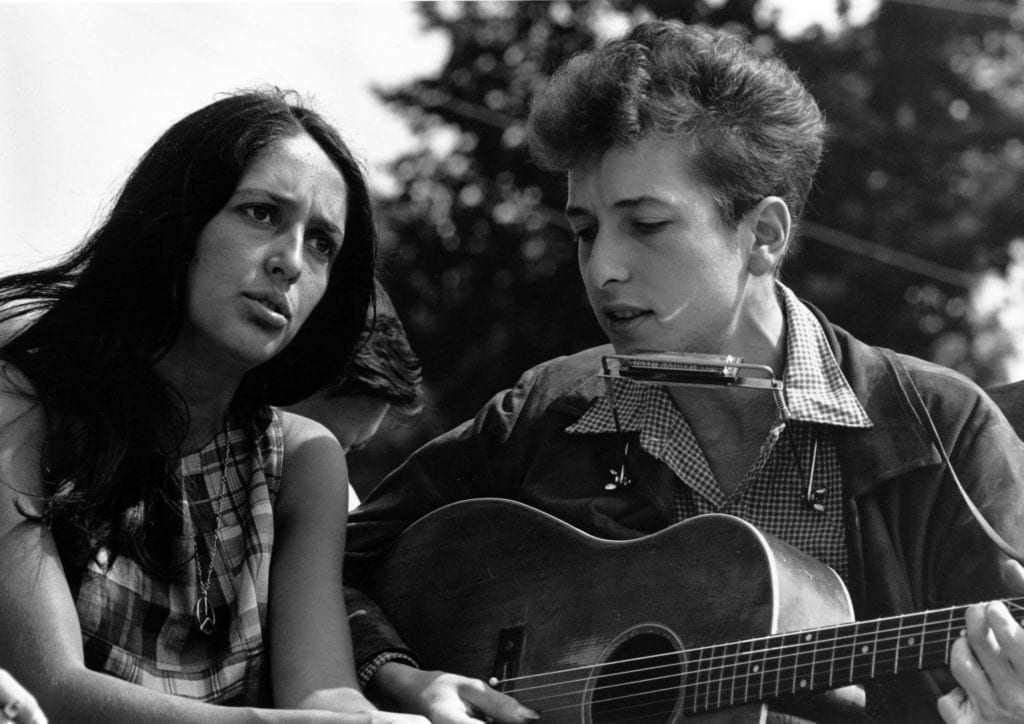

A good example of how this could happen is the folk resurgence that took place in the U.S. during the late 1950s and 1960s.

As the electric guitar became the norm for rock groups, and multitrack recording became a more prominent technique, it seemed that the future of music would follow an electric, heavily produced, full-band path.

Just about every popular artist seemed to be adopting a loud electric sound, until Bob Dylan and other folk revivalists popularized the simple combination of acoustic guitar and harmonica.

This diversion rebranded and reimagined a style of music many thought was lost. For many, it defined their times, while its classic instrumentation invited a look back at the past.

A similar resurgence would likely accompany the rise of A.I.-driven or communication-based music. It would offer a new perspective while relying heavily on nostalgia for, and appreciation of, the music that existed decades earlier.

There may come a day when an acoustic set is seen as antiquated. Perhaps even a DJ or EDM set with a real person behind the turntables will be seen as a thing of the past.

It’s difficult to say what will fall by the wayside as new technologies take over the music-making process. One thing is certain: there will always be people who yearn for the past and wish to see it recreated in their lifetime.

With this in mind, musicians and artists alike will have the responsibility of reminding future generations what it was like when people, not machines, made music.

Conclusion

Again, answering the question “What is the future of music?” is no easy task. No one can know for certain what will happen or what will be created.

But when we observe the close tie between music and technology, and how the emergence of new technology shapes music and other forms of self-expression, things become clearer.

We can see that as music becomes easier to produce and distribute, more people will make and release music. Pair this with popular forms of communication, and it’s reasonable to expect these two seemingly separate developments to intertwine. As a result, music may become a form of communication.

We can also see how advances in user data analytics and A.I.-driven computing may combine to create songs that sound like those we know and love. Given how these technologies operate, it is reasonable to assume that A.I.-generated songs will become better, and more popular, with time.

And lastly, we can speculate that when these two things do occur, there will be a resurgence of music made in the classic or antiquated way. When this happens, music may be more about understanding who we were than looking toward the future.

Whatever the case may be, the future of music holds exciting questions, and we will simply have to wait to see them answered.