What is Music Mastering?

Quick Answer

Music Mastering is a step in music production in which an audio recording (typically a stereo music mix) is prepared for distribution. How a mix is prepared for distribution during music mastering depends on the genre of the music, the intended platform it will be distributed on, and other factors.

What is Music Mastering in Detail

Until recently, music mastering was considered something of an enigma. Many artists, and even some engineers, didn’t know exactly what it entailed or how it was performed.

Today, music mastering is more commonly understood, as information and even full tutorials have been made available on YouTube and across the internet in general.

With that said, there’s still a lot to learn about mastering, how it’s performed, and some of the variables that affect it.

There is still a lot to learn about music mastering

We’ll primarily consider how the music itself affects the mastering process, and how the goal of a mastering engineer changes based on the music being mastered.

Additionally, we’ll give a brief synopsis of music mastering, including which forms of processing are used and why they’re used. These include:

- Equalization

- Compression

- Stereo-Imaging

- Harmonic Distortion

- Limiting

From there we’ll delve deeper into how the genre of music affects mastering as we briefly cover mastering for:

- Pop Music

- Rap or Hip-Hop Music

- Rock Music

- Metal Music

- Jazz Music

Finally, we’ll discuss how music mastering changes based on the medium on which the music will be distributed. We’ll briefly cover mastering music for these mediums:

- Digital Streaming

- Vinyl

- Cassette

Hopefully, the information provided here will both introduce you to music mastering and cover some of its rarely discussed, yet equally important, details.

If you have a mix that you’ve been working on, and you’re ready to hear it professionally mastered, send it to us here:

We’ll master it for you and send you a free mastered sample to review.

What Plugins or Processing are Used During Music Mastering?

During music mastering, many forms of processing are used. These include, but aren’t limited to, equalization, compression, stereo imaging, harmonic distortion and saturation, reverberation (used rarely), and limiting. They are applied as plugins in a digital setup, or as hardware in an analog setup.

Let’s look at these one by one.

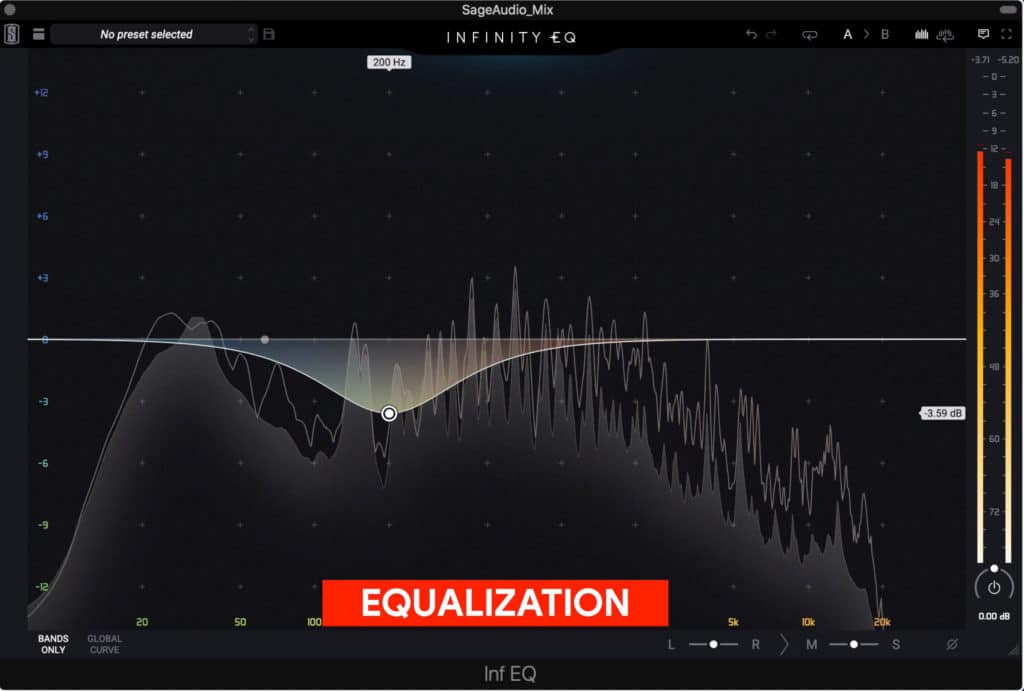

Equalization:

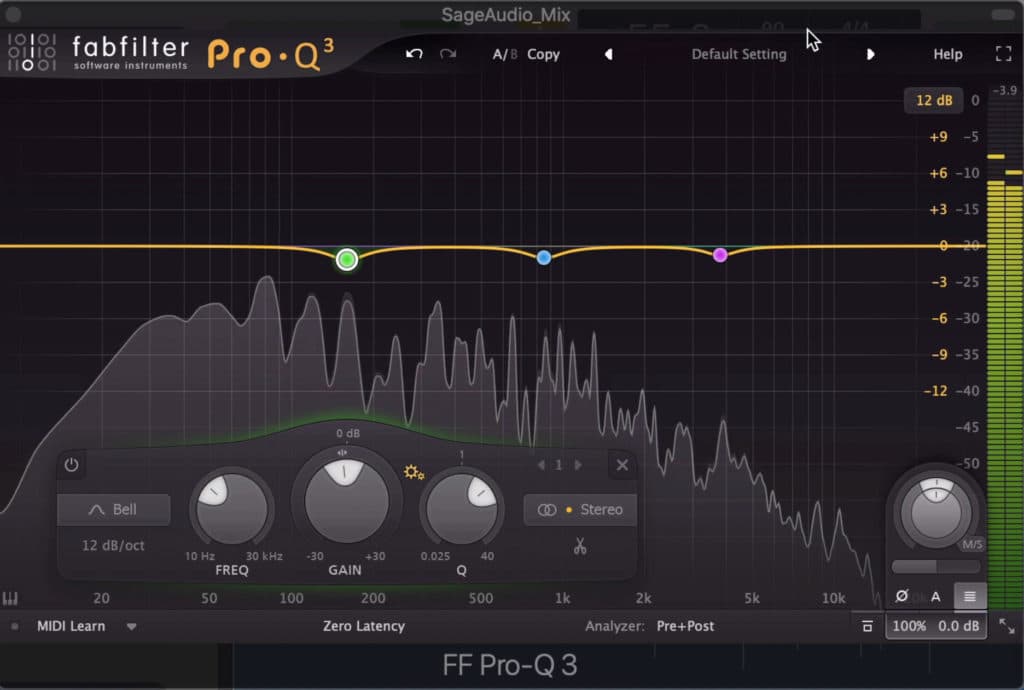

Equalization is used to balance and augment the frequency response.

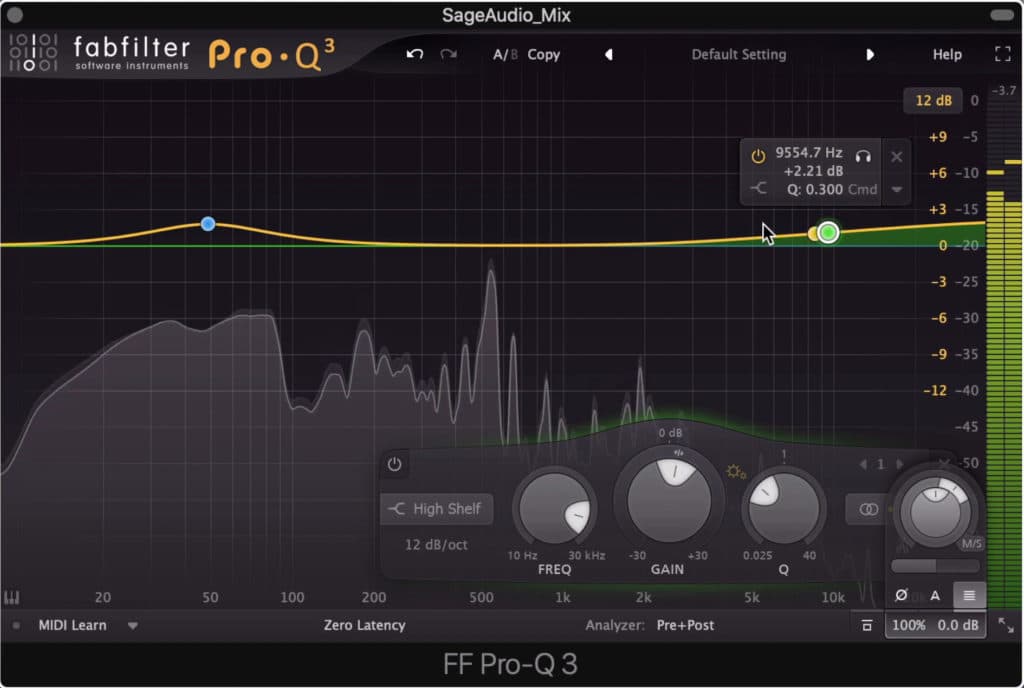

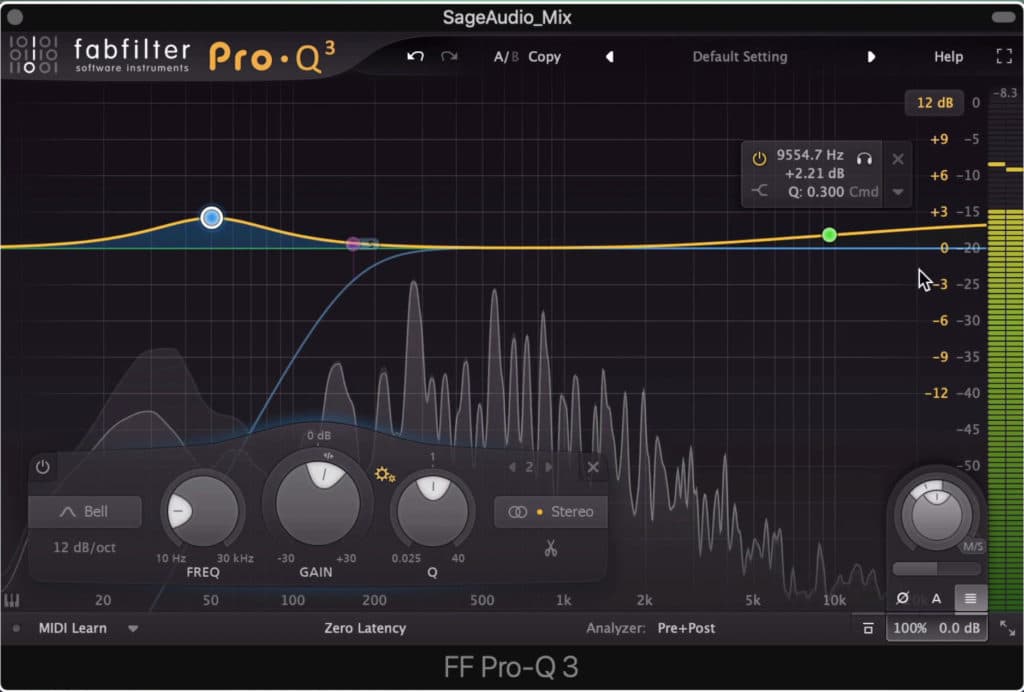

Equalization is used during mastering to attenuate and/or amplify any aspects of the frequency response. The goal of equalization is to create a balanced sound, one in which all frequencies of the signal are present and sound pleasing.

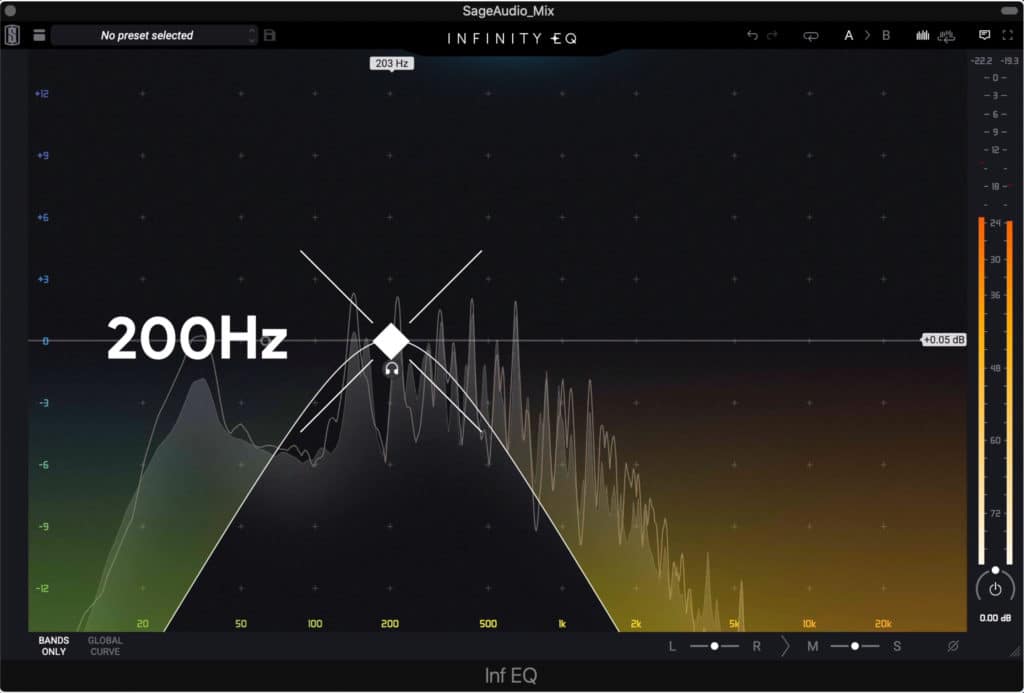

When using subtractive equalization, certain frequencies are attenuated. For example, if 200Hz is too prominent in a recording, the recording often sounds “muddy.” Just like in a mixing session, this frequency would be attenuated to lessen the “muddiness” of the recording.

In this example, 200Hz was too prominent and was attenuated during mastering.

When using additive equalization, certain frequencies are amplified. For example, if the kick isn’t cutting through enough and primarily occupies 60Hz-120Hz, then amplifying this section of the frequency response would make it more perceivable.

During both subtractive and additive equalization, the goal is to make an unbalanced frequency response sound more balanced.
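To make the two EQ moves above concrete, here is a minimal sketch of a single peaking (bell) filter. It assumes the widely used biquad formulas from Robert Bristow-Johnson's Audio EQ Cookbook. The function names, the 48 kHz sample rate, and the gain and Q values are hypothetical, illustrative choices, not settings from any particular mastering chain.

```python
import cmath
import math


def peaking_eq(fs, f0, gain_db, q):
    """Biquad coefficients (b, a) for a peaking/bell EQ (Audio EQ Cookbook formulas)."""
    amp = 10 ** (gain_db / 40)          # square root of the linear gain
    w0 = 2 * math.pi * f0 / fs          # center frequency in radians per sample
    alpha = math.sin(w0) / (2 * q)
    cos_w0 = math.cos(w0)
    b = [1 + alpha * amp, -2 * cos_w0, 1 - alpha * amp]
    a = [1 + alpha / amp, -2 * cos_w0, 1 - alpha / amp]
    # Normalize so that a[0] == 1
    return [x / a[0] for x in b], [x / a[0] for x in a]


def magnitude_db(b, a, f, fs):
    """Magnitude response of the biquad at frequency f, in dB."""
    z_inv = cmath.exp(-2j * math.pi * f / fs)
    num = b[0] + b[1] * z_inv + b[2] * z_inv ** 2
    den = a[0] + a[1] * z_inv + a[2] * z_inv ** 2
    return 20 * math.log10(abs(num / den))


def apply_biquad(samples, b, a):
    """Filter a list of samples (Direct Form I)."""
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in samples:
        y = b[0] * x + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out


fs = 48000
# Subtractive: cut 3 dB at 200 Hz to reduce "muddiness"
cut_b, cut_a = peaking_eq(fs, 200, -3.0, q=1.0)
# Additive: boost 2 dB centered near 85 Hz, roughly the middle of 60-120 Hz
boost_b, boost_a = peaking_eq(fs, 85, 2.0, q=1.4)

print(round(magnitude_db(cut_b, cut_a, 200, fs), 2))  # -3.0
```

At its center frequency, each filter changes the level by exactly the requested gain. Far from that frequency it leaves the signal essentially untouched, which is why bell filters suit targeted corrections like these.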

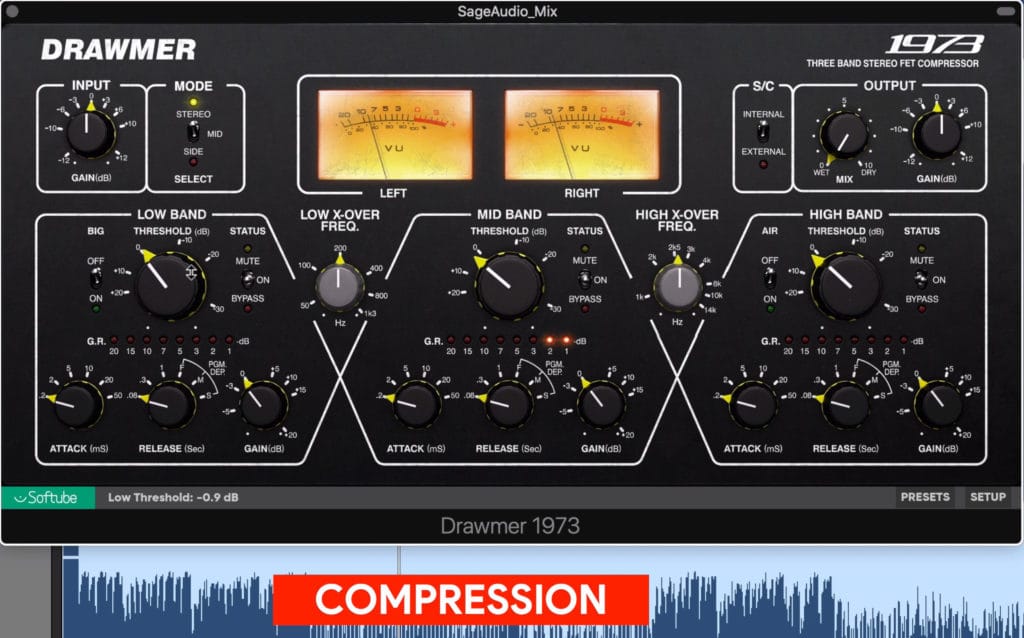

Compression:

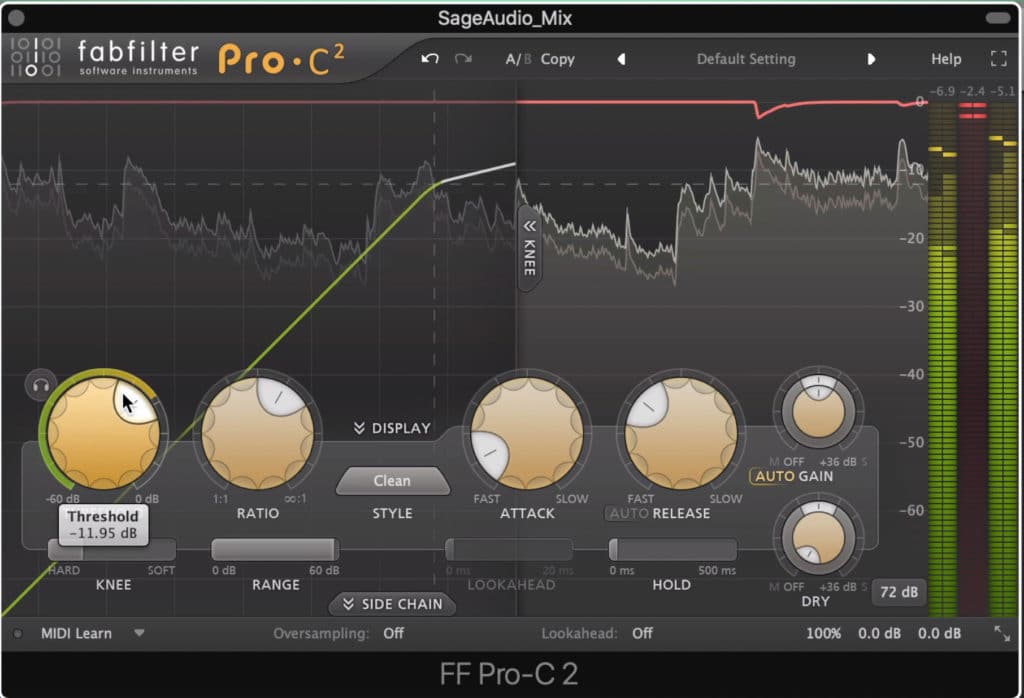

Compression is used to control dynamics and peaks.

Compression is used during music mastering to control dynamics. In the context of mastering, this mainly means the peaks that come close to causing clipping distortion.

Compression is used to control these dynamics so that the overall level of the master can be increased and made louder. Because there is only so much room in a digital system before clipping distortion occurs, dynamic peaks need to be controlled so the signal can be amplified without causing clipping distortion.

As you can imagine, the louder a master needs to be, the more compression is needed to control dynamics, and the less dynamic the master will be overall.
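The trade-off between loudness and dynamics can be sketched with a compressor's static gain curve. This minimal sketch uses a common textbook formulation with an optional quadratic soft knee. It ignores attack and release, which real compressors add on top. The threshold, ratio and example levels are hypothetical.

```python
def gain_reduction_db(level_db, threshold_db=-18.0, ratio=4.0, knee_db=0.0):
    """Static gain (dB, <= 0) a downward compressor applies at a given input level."""
    over = level_db - threshold_db
    if knee_db > 0 and abs(over) <= knee_db / 2:
        # Quadratic soft knee: blends smoothly from 1:1 into ratio:1
        return (1 / ratio - 1) * (over + knee_db / 2) ** 2 / (2 * knee_db)
    if over <= 0:
        return 0.0                    # below threshold: left untouched
    return (1 / ratio - 1) * over     # above threshold: overshoot is divided by the ratio


levels = [-30.0, -18.0, -12.0, -6.0]            # hypothetical peak levels in dBFS
compressed = [lvl + gain_reduction_db(lvl) for lvl in levels]
makeup_db = -1.0 - max(compressed)              # raise the loudest peak to -1 dBFS
mastered = [lvl + makeup_db for lvl in compressed]
print(compressed)  # [-30.0, -18.0, -16.5, -15.0]
print(mastered)    # [-16.0, -4.0, -2.5, -1.0]
```

Before compression, the example spans 24 dB. Afterwards it spans 15 dB, and the freed-up headroom allows 14 dB of makeup gain. The master gets louder precisely because it got less dynamic.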

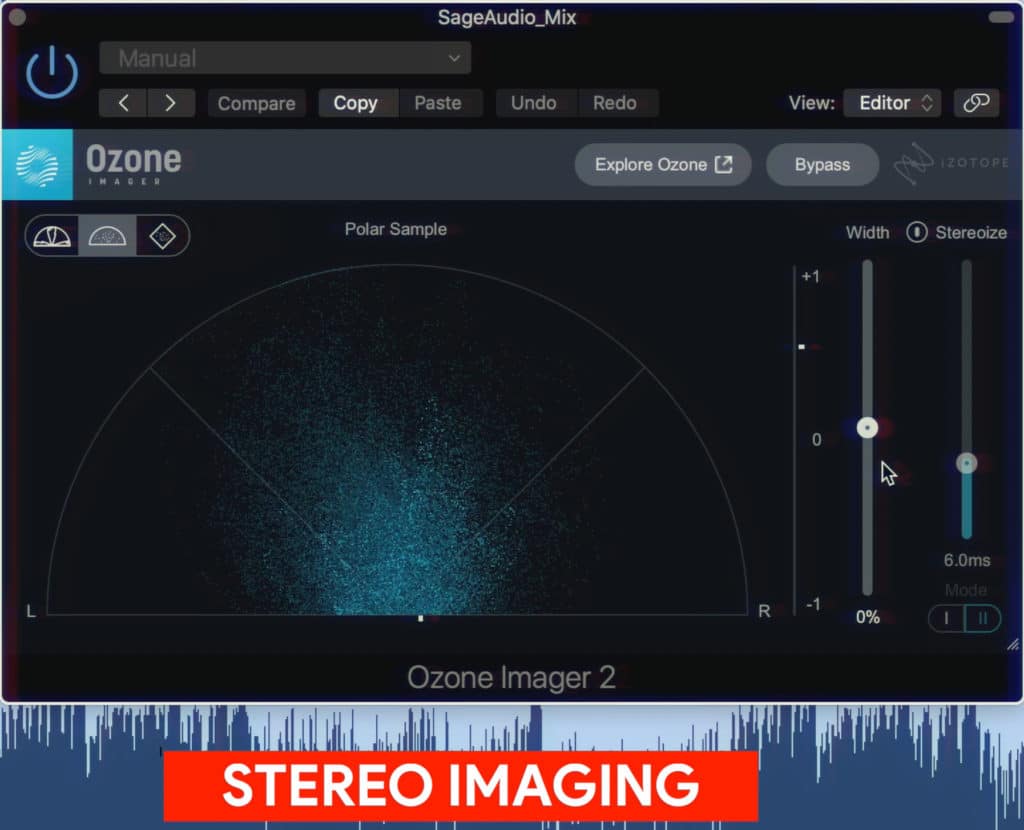

Stereo Imaging:

Stereo imaging is used to either widen or narrow the stereo width of a signal.

Stereo imaging typically is used during mastering as a way to make the master sound wider. Stereo imaging is also helpful when trying to create a more centered and focused sound.

During mastering, stereo imaging most often works by introducing minute phase differences between signals to expand the signal into the stereo image. Panning is rarely, if ever, used during mastering to affect the stereo image.

Instead, mid-side processing is a popular option, as it allows engineers to separate and then affect the middle or center image, and the side or stereo image separately.

The wider a master is made, the less focused and powerful it’ll sound. Having too wide of a master can create a “washed out” sound, in which no instruments have a defined place within the stereo field.
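Mid-side width control is simple arithmetic. The mid channel is what left and right share, and the side channel is what differs between them. Scaling the side channel before decoding widens or narrows the image. In this minimal sketch, the function names and the example width of 1.3 are hypothetical.

```python
def adjust_width(left, right, width):
    """Mid-side width control: 1.0 leaves the image unchanged,
    values below 1.0 narrow it (0.0 = mono), values above 1.0 widen it."""
    out_left, out_right = [], []
    for l, r in zip(left, right):
        mid = 0.5 * (l + r)           # center image
        side = 0.5 * (l - r) * width  # stereo image, scaled
        out_left.append(mid + side)
        out_right.append(mid - side)
    return out_left, out_right


def side_to_mid_ratio(left, right):
    """Energy of the side channel relative to the mid channel."""
    mid_energy = sum((0.5 * (l + r)) ** 2 for l, r in zip(left, right))
    side_energy = sum((0.5 * (l - r)) ** 2 for l, r in zip(left, right))
    return side_energy / mid_energy if mid_energy else float("inf")


left = [0.8, 0.2, -0.5, 0.1]
right = [0.6, -0.3, -0.4, 0.4]
wide_l, wide_r = adjust_width(left, right, 1.3)
print(side_to_mid_ratio(left, right), side_to_mid_ratio(wide_l, wide_r))
```

As the width factor grows, side energy grows relative to mid energy. Pushed too far, this produces the "washed out" sound described above.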

Harmonic Distortion and Saturation:

Harmonic distortion and saturation compress and distort the signal, making it sound fuller and more complex.

Although distortion is often avoided, certain forms of distortion are enjoyable for listeners. Subtle harmonic distortion is used to make a recording sound fuller, as the added harmonics fill in gaps in the frequency response and make the fundamental frequencies of a recording more apparent.

Harmonic distortion and saturation go hand-in-hand as they’re closely related forms of processing.

Saturation is a combination of compression and harmonic distortion, and occurs when the electrical components of a piece of hardware are overloaded or “saturated.”

When an electrical component is saturated, it means that it can no longer accurately reproduce an electrical signal - this results in distortion and gradual compression.

Saturation is a combination of compression and distortion.

This phenomenon can be emulated in digital mastering with analog emulation plugins, which simulate the sound of saturation by recreating specific harmonics and introducing soft-knee compression.

Harmonic distortion and saturation should be used carefully during mastering, as too much distortion can be fatiguing to listen to for an extended period of time.
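A hyperbolic tangent (tanh) curve is a common stand-in for saturation. It is used here as an assumption, not as the algorithm of any specific analog-emulation plugin. The curve squashes peaks more than quieter parts, which gives gentle compression, and it adds harmonics above the fundamental. This minimal sketch saturates a sine and measures individual harmonics with a naive DFT. The `drive` value and function names are hypothetical.

```python
import math


def saturate(x, drive=2.0):
    """tanh soft clipper, normalized so an input of 1.0 still maps to 1.0."""
    return math.tanh(drive * x) / math.tanh(drive)


def harmonic_amplitude(signal, harmonic_bin):
    """Amplitude of a single DFT bin (naive DFT, fine for short test signals)."""
    n = len(signal)
    re = sum(s * math.cos(2 * math.pi * harmonic_bin * i / n) for i, s in enumerate(signal))
    im = sum(s * math.sin(2 * math.pi * harmonic_bin * i / n) for i, s in enumerate(signal))
    return 2 * math.hypot(re, im) / n


n, cycles = 1024, 8                   # exactly 8 cycles of a sine in 1024 samples
sine = [0.9 * math.sin(2 * math.pi * cycles * i / n) for i in range(n)]
shaped = [saturate(s) for s in sine]

for h in (1, 2, 3, 5):
    # Harmonic h of the tone lives in DFT bin h * cycles
    print(h, round(harmonic_amplitude(shaped, h * cycles), 4))
```

Because tanh is symmetric, it only adds odd harmonics: the 3rd and 5th appear, while the 2nd stays at essentially zero. An asymmetric curve would add even harmonics as well. Lower `drive` values keep the effect subtle.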

Reverberation:

Reverb is rarely used during mastering.

Although reverb is a popular effect during mixing, it rarely plays a role during mastering. That said, there may still be some applications for reverb during mastering.

The main reason a mastering engineer would use reverb would be to create a cohesive sound amongst the instrumentation of a mix. That said, reverb can quickly become noticeable in a negative way, as it can cause the power and impact of the master to become lessened and dispersed.

When reverb is used for mastering, the effect should be mainly dry.

If you ever create a master and choose to use reverb, be sure to use the reverb sparingly by having the wet/dry function in the almost completely dry position.
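The wet/dry control is a linear blend between the unprocessed signal and the reverb output. In this minimal sketch, the "reverb" is only a single feedback comb filter. That is a crude, hypothetical placeholder, far simpler than a real reverb algorithm. The 5% wet setting is likewise just an illustration of "almost completely dry", not a recommended value.

```python
def feedback_comb(dry, delay_samples, feedback):
    """Crude reverb stand-in: y[n] = x[n] + feedback * y[n - delay]."""
    wet = []
    for i, x in enumerate(dry):
        echo = wet[i - delay_samples] if i >= delay_samples else 0.0
        wet.append(x + feedback * echo)
    return wet


def wet_dry_mix(dry, wet, wet_amount):
    """Blend dry and wet signals: 0.0 is fully dry, 1.0 is fully wet."""
    if not 0.0 <= wet_amount <= 1.0:
        raise ValueError("wet_amount must be between 0 and 1")
    return [(1.0 - wet_amount) * d + wet_amount * w for d, w in zip(dry, wet)]


impulse = [1.0] + [0.0] * 9
wet = feedback_comb(impulse, delay_samples=3, feedback=0.5)
mostly_dry = wet_dry_mix(impulse, wet, wet_amount=0.05)
print(mostly_dry)
```

With the mix nearly dry, the echoes arrive at a small fraction of their full level. The original transient is preserved, so the power and impact of the master are largely kept intact.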

Limiting:

Limiting should not be used excessively.

Limiting is perhaps the most well-known form of processing in music mastering - limiting is also the most commonly overused effect in mastering.

When used correctly, limiting protects a signal from clipping distortion and adds a realistic amount of loudness to a master.

When used incorrectly, limiting makes a signal excessively loud, introducing distortion and producing an incredibly compressed sound in the process.

An excessively limited signal sounds too aggressive and will fatigue listeners within a short period of time.
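The core idea of a limiter fits in a few lines. This minimal sketch clamps gain instantly whenever a sample would exceed a ceiling, then lets the gain recover over a release time. It only looks at sample peaks, with no lookahead or oversampling, which practical limiters generally add. The parameter values and names are hypothetical.

```python
import math


def peak_limiter(samples, ceiling_db=-1.0, release_ms=50.0, fs=48000):
    """Sample-peak limiter with instant attack and exponential release."""
    ceiling = 10 ** (ceiling_db / 20)
    release = math.exp(-1.0 / (release_ms * 0.001 * fs))  # per-sample smoothing factor
    gain = 1.0
    out = []
    for x in samples:
        # Gain that would keep this sample at or below the ceiling
        needed = min(1.0, ceiling / abs(x)) if x != 0.0 else 1.0
        if needed < gain:
            gain = needed                                  # attack: clamp immediately
        else:
            gain = needed + (gain - needed) * release      # release: recover gradually
        out.append(x * gain)
    return out


fs = 48000
tone = [0.5 * math.sin(2 * math.pi * 100 * i / fs) for i in range(fs // 10)]
pushed = [4.0 * s for s in tone]          # roughly +12 dB of input gain
limited = peak_limiter(pushed, ceiling_db=-1.0, fs=fs)
print(round(20 * math.log10(max(abs(s) for s in limited)), 2))
```

However hard the input is pushed, the output peak stays at the ceiling. The extra input gain turns into gain reduction rather than level, and with a shorter release that reduction starts to reshape the waveform itself and becomes audible as distortion.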

Now that we understand some of the forms of processing that go into music mastering, let’s talk more about the music itself. In this next section, we’ll discuss how the genre of the music being mastered affects the mastering process.

If you want to learn how to master music, check out our blog post and video detailing it step by step:

We’ll show you some simple steps for mastering your track.

Mastering Based On Genre

Mastering for Pop Music

When mastering for pop music, an engineer will often compress and limit more to create an overall louder master. Additionally, the low and high-frequency ranges will be made more apparent through additive equalization.

Pop music is mastered moderately loud to loud.

The stereo image is often made wider in pop music mastering than in other genres. Saturation is used sparingly and as a way to make the master sound fuller - not as a stylistic or prominent aspect of the master.

The low and high frequency ranges of pop music are often amplified.

Lastly, compression release (and sometimes attack) times are set to be in time with the tempo of the track, which makes the compression feel more musical.
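Syncing compression times to tempo starts with converting BPM to note lengths in milliseconds. This minimal sketch assumes the beat is a quarter note, as in 4/4. The helper name is hypothetical, and which note value to choose is still a decision made by ear.

```python
def note_length_ms(bpm, note=1 / 4, dotted=False):
    """Length of a note value in milliseconds, assuming the beat is a quarter note."""
    quarter_ms = 60000.0 / bpm          # one beat
    length = quarter_ms * (note / 0.25)
    return length * 1.5 if dotted else length


for name, note in (("quarter", 1 / 4), ("eighth", 1 / 8), ("sixteenth", 1 / 16)):
    print(name, note_length_ms(120, note))
# quarter 500.0
# eighth 250.0
# sixteenth 125.0
```

At 120 BPM, for example, a release of about 125 ms or 250 ms lets the gain recover in step with sixteenth or eighth notes.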

Mastering for Rap or Hip-Hop Music

Rap music is mastered moderately loud to loud.

Rap or Hip-Hop music will be mastered louder than most other genres, which means more compression and limiting will be used. The low and high frequencies will be significantly louder than most other genres, sometimes to the extent that they will distort consumer-grade equipment.

The low and high frequency ranges are much louder than other genres.

The stereo image is typically very centered in the low-frequency range and more dispersed in the high-frequency range. Some rap, trap, and hip-hop songs are made almost completely mono to ensure playability on all consumer-grade equipment.
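One way to center the low end is mid-side processing with a high-pass filter on the side channel only. Below the crossover, stereo differences are removed; above it, the side channel passes through. This minimal sketch uses a gentle one-pole high-pass filter and a hypothetical 120 Hz crossover. A steeper filter would give a cleaner split.

```python
import math


def mono_low_end(left, right, crossover_hz=120.0, fs=48000):
    """High-pass the side channel so content below the crossover ends up mono."""
    rc = 1.0 / (2 * math.pi * crossover_hz)
    dt = 1.0 / fs
    alpha = rc / (rc + dt)              # one-pole high-pass coefficient
    prev_side = prev_hp = 0.0
    out_left, out_right = [], []
    for l, r in zip(left, right):
        mid = 0.5 * (l + r)
        side = 0.5 * (l - r)
        hp = alpha * (prev_hp + side - prev_side)   # filtered side channel
        prev_side, prev_hp = side, hp
        out_left.append(mid + hp)
        out_right.append(mid - hp)
    return out_left, out_right


fs = 48000
# A 40 Hz tone that exists only in the side channel (left and right out of phase)
low = [math.sin(2 * math.pi * 40 * i / fs) for i in range(fs)]
out_l, out_r = mono_low_end(low, [-s for s in low], fs=fs)
print(round(max(abs(s) for s in out_l[fs // 2:]), 3))
```

The out-of-phase 40 Hz content is strongly reduced, while anything already centered passes through unchanged. This helps the low end translate to mono and consumer-grade playback systems.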

Expansion of the low and high frequencies can be helpful when mastering hip-hop, as some mixes come in too compressed. Some slight expansion below 150Hz and above 4kHz can be used to bring life back into an overly compressed hip-hop mix.
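Upward expansion works like compression in reverse: levels above a threshold are pushed further up, so the band's dynamic range grows. This minimal sketch shows only the static gain curve for a single band. In practice it would be applied separately to the band below 150Hz and the band above 4kHz after a crossover, with level detection over time. The threshold, ratio and example levels are hypothetical.

```python
def upward_expansion_db(level_db, threshold_db=-20.0, ratio=1.2):
    """Static gain (dB, >= 0) added by an upward expander above its threshold."""
    over = level_db - threshold_db
    return over * (ratio - 1.0) if over > 0 else 0.0


levels = [-30.0, -20.0, -15.0, -10.0]   # hypothetical short-term levels of one band, in dB
expanded = [lvl + upward_expansion_db(lvl) for lvl in levels]
print([round(x, 2) for x in expanded])  # [-30.0, -20.0, -14.0, -8.0]
```

In this example, the span between the quietest and loudest moments grows from 20 dB to 22 dB. That restored contrast is the "life" an overly compressed mix was missing.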

Mastering for Rock Music

Rock music is mastered moderately quiet to loud, depending on the sub-genre.

Rock music masters range in their loudness, with some being made very loud, and some made with an emphasis on the dynamic range. How loud you make a rock music master often depends on the artist’s preference.

Compression will be set to be in time with the tempo of the track. The harder the rock, the more important transient retention becomes.

Distortion may not be needed if the instrumentation is already heavily distorted.

If a rock mix is already heavily distorted, it may not help to add more distortion - this again depends on the artist’s preference and the sub-genre of the rock music.

The stereo image of a rock master ranges from little stereo expansion to moderate stereo expansion. You’ll rarely find rock songs that are made as wide as pop tracks.

Mastering for Metal Music

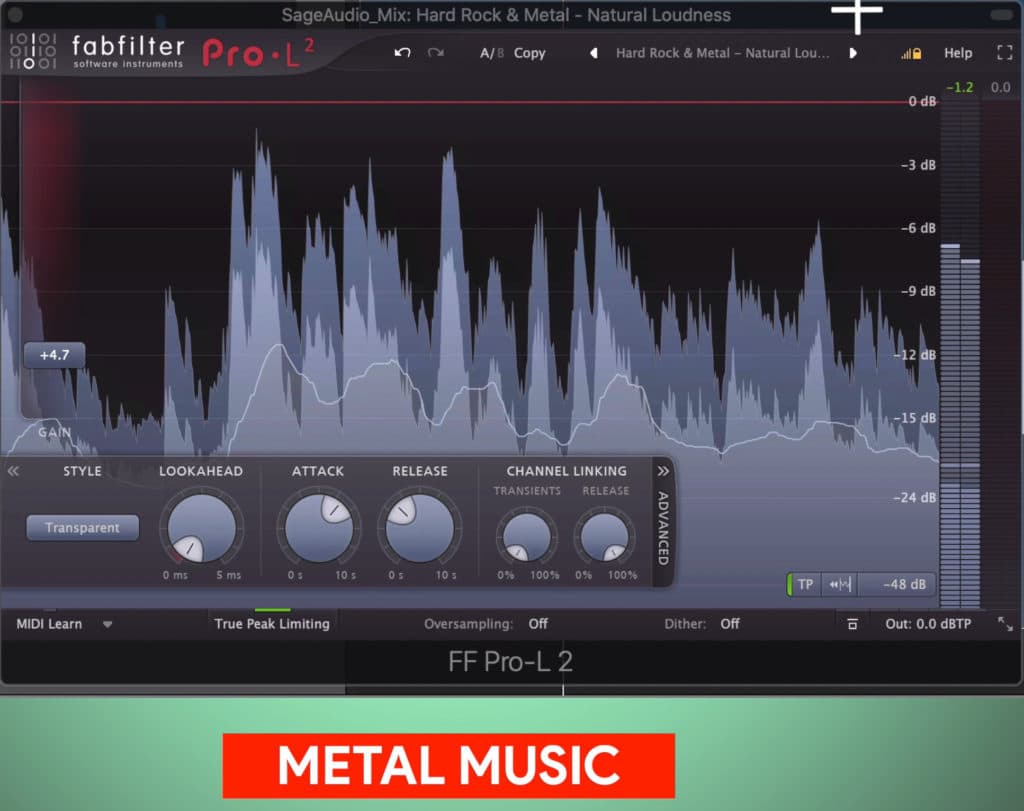

Metal masters are typically made loud; however, they have become increasingly reserved in recent years, with added emphasis on dynamic and transient retention. Although metal masters became notorious for heavy amounts of compression and limiting, today they tend to be made moderately loud.

Today, metal music is mastered moderately loud.

The distortion present in a Metal mix comes from the instrumentation itself and cannot be decreased during mastering. That said, more distortion is rarely needed, and can become excessive quickly.

As with Rock Music, Metal Music may not need extra distortion.

The stereo image of metal is similar to rock music and will depend on the sub-genre of metal music.

Compression should be minimized to avoid transient reduction.

The frequency spectrum of a Metal master is balanced, with no extra amplitude given to any particular section of the frequency spectrum.

Mastering for Jazz Music

Jazz music is mastered much quieter than other genres. When mastering Jazz music, the goal is to make the master sound as close as possible to a live performance, or perhaps an augmented live performance.

Jazz music is mastered quietly.

For this reason, equalization is used solely in a corrective way. If the high or low-frequency ranges of a Jazz master are accentuated like they are in pop or rap, listeners will notice and react negatively to the unwanted changes.

The same can be said about compression, saturation, and limiting. Each should be introduced sparingly and carefully.

If compression, saturation, or limiting are introduced in the same manner they are in other genres, this processing will detract from the overall quality of the master.

The EQ should be corrective.

Now that we’ve briefly covered how certain genres are mastered, let’s discuss the last aspect of music mastering: the medium on which the master is distributed.

If you want to learn more about mastering based on genre, check out our YouTube playlist on the topic:

In it, you’ll find 15 videos, each covering a specific music genre.

Mastering for Specific Mediums

How Music is Mastered for Digital Streaming Services

When music is being mastered for a digital streaming service, only a couple of technical limitations need to be taken into consideration: the integrated loudness of the master, measured in LUFS, and the loudest peak of the signal, measured in dBTP (dB True Peak).

The integrated loudness needs to be taken into consideration due to a process known as loudness normalization. Loudness normalization occurs prior to the audio being streamed and is performed by an algorithm associated with the actual streaming service (it does not occur during mastering).

Spotify normalizes the loudness of its content to -14 LUFS.

For example, the loudness normalization of Spotify is -14 LUFS, meaning if a master is quieter than this measurement it will be turned up, and if it is louder than this measurement, it will be turned down.
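The arithmetic of loudness normalization is a single subtraction. This minimal sketch applies it to two hypothetical masters and shows where their peaks end up after normalization. The function name and all loudness and peak figures are illustrative assumptions, and how each service and playback setting handles turning tracks up can differ.

```python
def normalization_gain_db(integrated_lufs, target_lufs=-14.0):
    """Gain a loudness-normalizing player would apply to reach the target."""
    return target_lufs - integrated_lufs


masters = [("loud master", -8.0, -0.1), ("dynamic master", -14.0, -1.0)]
for name, lufs, peak_dbtp in masters:
    gain = normalization_gain_db(lufs)
    print(f"{name}: gain {gain:+.1f} dB, peak after normalization {peak_dbtp + gain:.1f} dBTP")
```

Both masters end up playing at the same loudness. The loud master is simply turned down by 6 dB, so the dynamics it gave up bought it nothing on the platform.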

With that in mind, a mastering engineer shouldn’t master music incredibly loud and sacrifice dynamics for this loudness if the master will be turned down from loudness normalization.

The loudest peaks need to be taken into consideration because small changes in amplitude occur during the encoding process used by streaming services. These small changes can push the master above full scale, resulting in clipping distortion during playback.

With that said, it helps to keep the loudest peak below -1dBTP.
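True peak differs from the highest sample value because the reconstructed waveform can rise between samples. This minimal sketch estimates inter-sample peaks with Hann-windowed sinc interpolation at 4x oversampling. It is a rough illustration under those assumptions, not a meter compliant with ITU-R BS.1770, which specifies its own oversampling filter. The function names and test tone are hypothetical.

```python
import math


def sample_peak(samples):
    return max(abs(s) for s in samples)


def true_peak_estimate(samples, oversample=4, half_taps=16):
    """Rough inter-sample peak estimate via Hann-windowed sinc interpolation."""
    n = len(samples)
    peak = sample_peak(samples)
    for i in range(n - 1):
        for k in range(1, oversample):
            t = i + k / oversample            # position between samples i and i+1
            acc = 0.0
            for j in range(max(0, i - half_taps + 1), min(n, i + half_taps + 1)):
                x = t - j                     # never zero, since t is fractional
                window = 0.5 * (1.0 + math.cos(math.pi * x / half_taps))
                acc += samples[j] * math.sin(math.pi * x) / (math.pi * x) * window
            peak = max(peak, abs(acc))
    return peak


def to_db(linear):
    return 20 * math.log10(linear)


# A sine at a quarter of the sample rate, phased so no sample lands on its crest
tone = [math.sin(math.pi / 2 * i + math.pi / 4) for i in range(400)]
tp_db = to_db(true_peak_estimate(tone))
print(f"sample peak: {to_db(sample_peak(tone)):.2f} dBFS")  # about -3.01
print(f"true peak estimate: {tp_db:.2f} dBTP, below -1 dBTP: {tp_db <= -1.0}")
```

Judged by its samples alone, this tone appears to have about 3 dB of headroom. Its true peak is close to full scale, so it would fail a -1 dBTP check.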

How Music is Mastered for Vinyl Records

Vinyl records have many technical limitations that need to be taken into consideration during the mastering process. The primary aspects to consider include the amount of distortion the lathe can tolerate and how dynamic a record can be during playback without the needle skipping.

The physical nature of a vinyl record imposes technical limitations.

A master for a vinyl record needs controlled dynamics to avoid skipping, lower harmonic distortion to avoid additional distortion during transfer, and a more controlled high-frequency range to avoid overwhelming the lathe.

The sequence of the tracks also needs to be planned ahead of time, as high frequencies become attenuated toward the center of the record, where the groove passes under the needle more slowly.
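The high-frequency loss toward the center is largely geometric. At a fixed rotation speed, the groove moves past the stylus more slowly at smaller radii, so each cycle of a high frequency is cut into a shorter length of groove. This minimal sketch assumes a 12-inch LP at 33 1/3 rpm, with approximate radii of 146 mm for the outermost and 60 mm for the innermost grooves. Those radii and the function names are illustrative assumptions, not cutting specifications.

```python
import math


def groove_speed_mm_per_s(radius_mm, rpm=100 / 3):
    """Linear groove speed under the stylus: v = 2 * pi * r * (rpm / 60)."""
    return 2 * math.pi * radius_mm * rpm / 60


def wavelength_um(frequency_hz, radius_mm, rpm=100 / 3):
    """Physical length of one cycle cut into the groove, in micrometres."""
    return groove_speed_mm_per_s(radius_mm, rpm) / frequency_hz * 1000


OUTER_MM, INNER_MM = 146.0, 60.0   # assumed approximate radii for a 12-inch LP
for label, r in (("outer", OUTER_MM), ("inner", INNER_MM)):
    print(label, round(groove_speed_mm_per_s(r)), "mm/s,",
          round(wavelength_um(15000, r), 1), "um per 15 kHz cycle")
```

With these assumed radii, the innermost groove travels at well under half the speed of the outermost one. The same 15 kHz cycle must therefore be cut into less than half the groove length, which is why tracks with demanding high-frequency content are often better placed earlier on a side.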

How Music is Mastered for Cassette

How music is mastered for cassette depends on the type of tape the master will be recorded onto. Masters made for cassette will most likely be recorded onto Type I tape, which means the master will need to be made louder to cover tape hiss and will need an amplified high-frequency range.

Cassettes cannot handle excessive distortion and need to be recorded onto at a high level to cover up tape hiss.

If you want to learn more about mastering for Vinyl or Cassette, here are some great blog posts and videos on the topic:

Conclusion

Music mastering depends on many factors, mainly the genre and how the music is distributed. Different genres are mastered differently from one another, and different mediums have different requirements.

Mastering music includes equalization, compression, harmonic distortion and saturation, stereo imaging (often using mid-side processing), and limiting.

How these forms of processing are introduced depends heavily on the genre and the medium.

With that said, music mastering is a complex field with many factors that need to be taken into consideration. In this overview of music mastering, some of the finer details haven’t been discussed.

It’s best to look more into the topics touched on above to get a more comprehensive understanding.

Have you mastered music before?