What is a Mixing Engineer?

Quick Answer

A mixing engineer is responsible for adding creative as well as corrective processing to an audio recording. Typically, a mixing engineer processes multiple tracks, or individual instrument groups, each in its own way to improve the sonic quality and overall enjoyability of a musical recording.

What is a Mixing Engineer in Detail

A mixing engineer is responsible for many things, but often gets to experience the most creative aspect of the music production process.

Mixing is both a technical and a creative aspect of audio production.

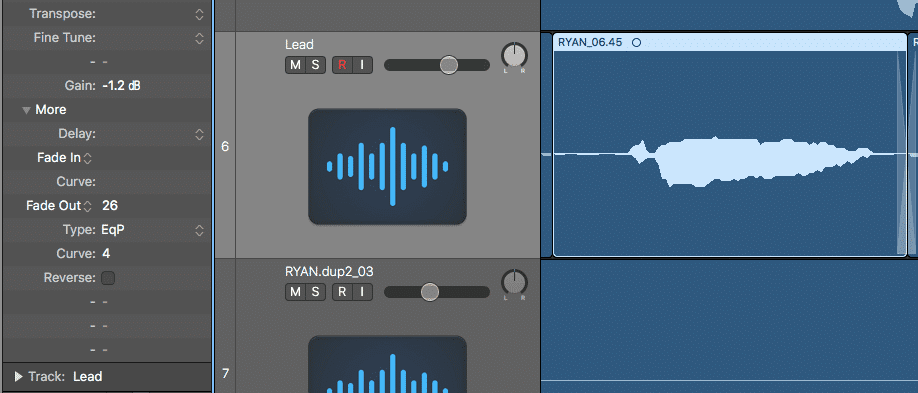

Typically, a mixing engineer receives the edited instrument groups: the best takes have already been chosen, the levels of the performances have been roughly balanced with clip gain, and all of the necessary cuts and fades have been applied.

In this regard, a mixing engineer has a clean and organized session to work with, since they typically (and ideally) receive consolidated instrument tracks with no alternate takes and no need for further editing.

Granted, this isn’t always the case; a mixing session may still involve some light editing. In a professional mixing session, however, the editing has already taken place.

When mixing begins, ideally, all editing has already been completed.

This leaves the mixing engineer free to focus entirely on the creative and technical process of balancing the instrument groups in an entertaining and sonically pleasing way. This freedom often makes mixing the most creative and unrestrained aspect of the music production process.

If you’re considering becoming a mixing engineer, or are simply curious what a mixing engineer does, follow along as we cover various roles and responsibilities of a mixing engineer.

Mixing can be incredibly rewarding, as it often has the fewest technical constraints.

We’ll be looking into the ways a mixing engineer affects and processes the signal, what comes after mixing and how that affects the mixing process, how mixing can be used for creative effect, and some of the techniques you can use to make mixing a bit easier or make your mixes sound better.

What Processing or Effects are Used During Mixing?

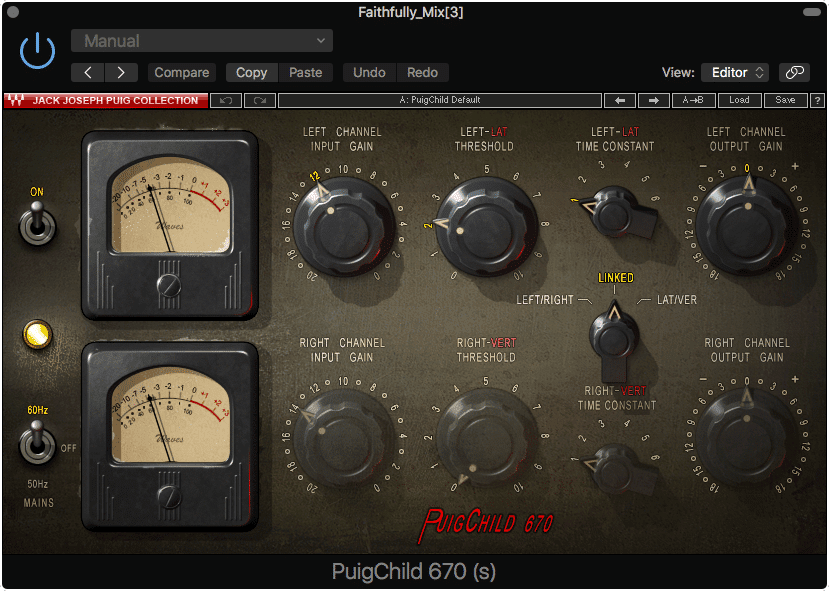

During mixing, dynamic, spectral, and temporal (time-based) effects are used to shape the signal in various ways. These forms of processing include, but are not limited to, compression, saturation (a mixture of compression and distortion), equalization, distortion and harmonic generation, stereo imaging, delay, reverb, and various forms of analog emulation.

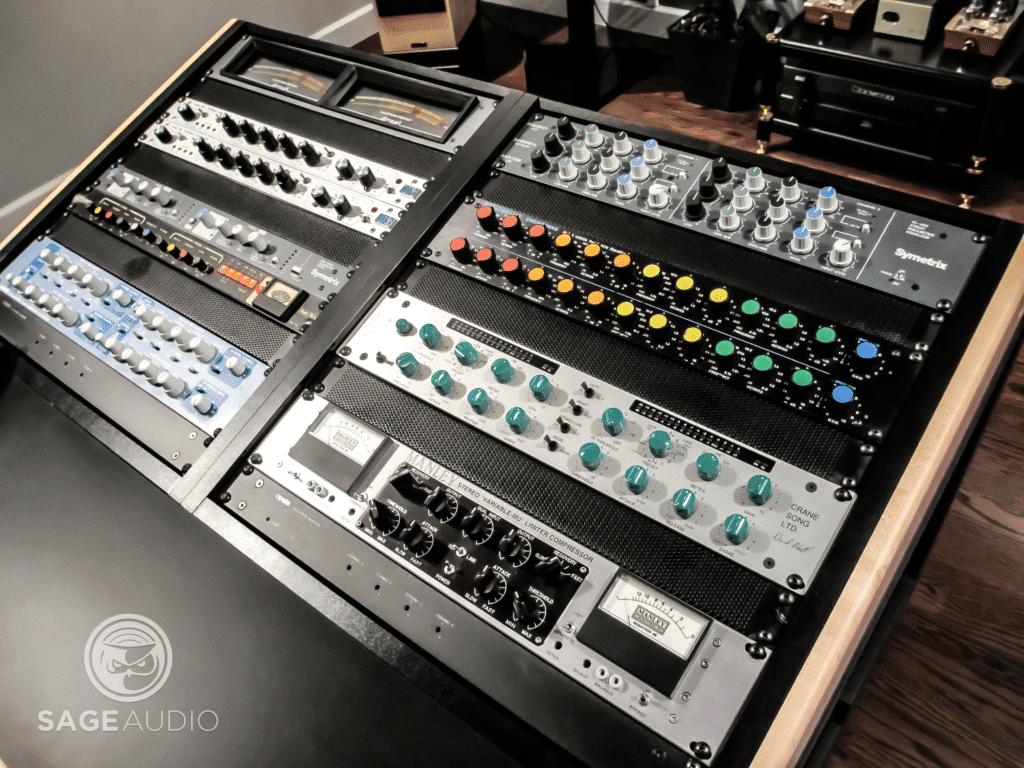

Compression, analog emulation, and other various forms of processing are used during the mixing process.

Each of these types of processing can be implemented in a myriad of ways and can affect the signal in numerous, if not countless, ways.

With that said, instead of trying to cover these forms of processing exhaustively, let’s look at a few select examples of how different types of processing are used during mixing.

But Just to Reiterate, a Mixing Engineer Adds These Effects During Mixing:

- Compression

- Saturation

- Equalization

- Distortion and Harmonic Generation

- Stereo Imaging

- Delay

- Reverb

- Analog Emulation

Hopefully, looking at some examples of how these effects are used will offer a better understanding of what a mixing engineer is and what they do when mixing.

Let’s say a mixing engineer wanted to make a lead vocal sound thicker and stand out from the rest of the instrumentation. This common mixing task would take multiple forms of processing to accomplish.

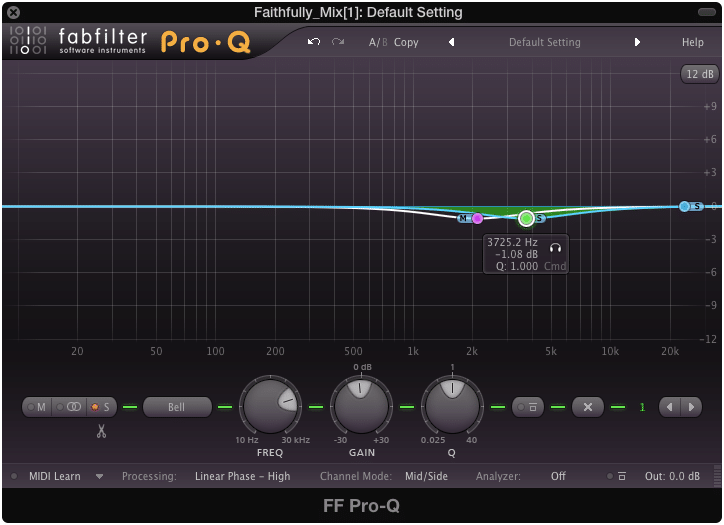

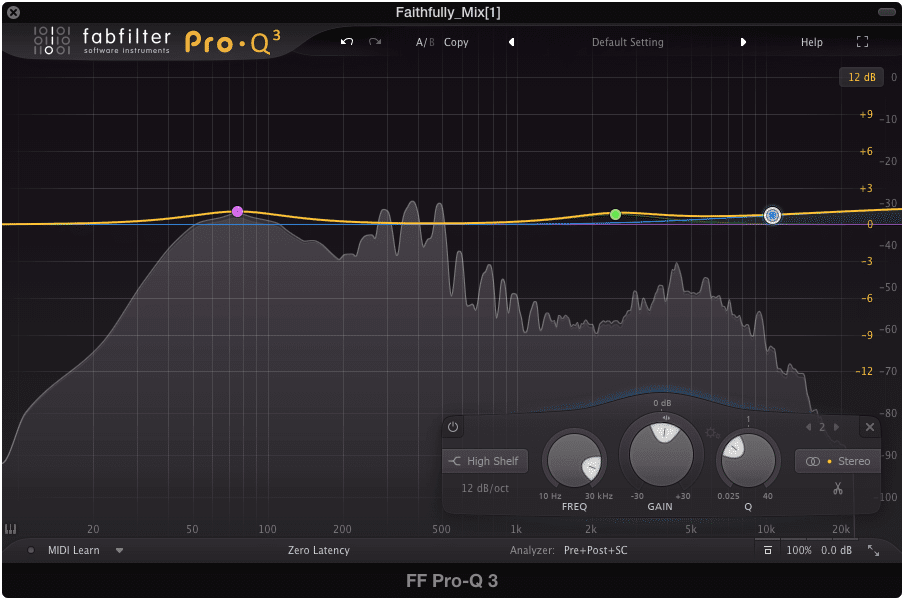

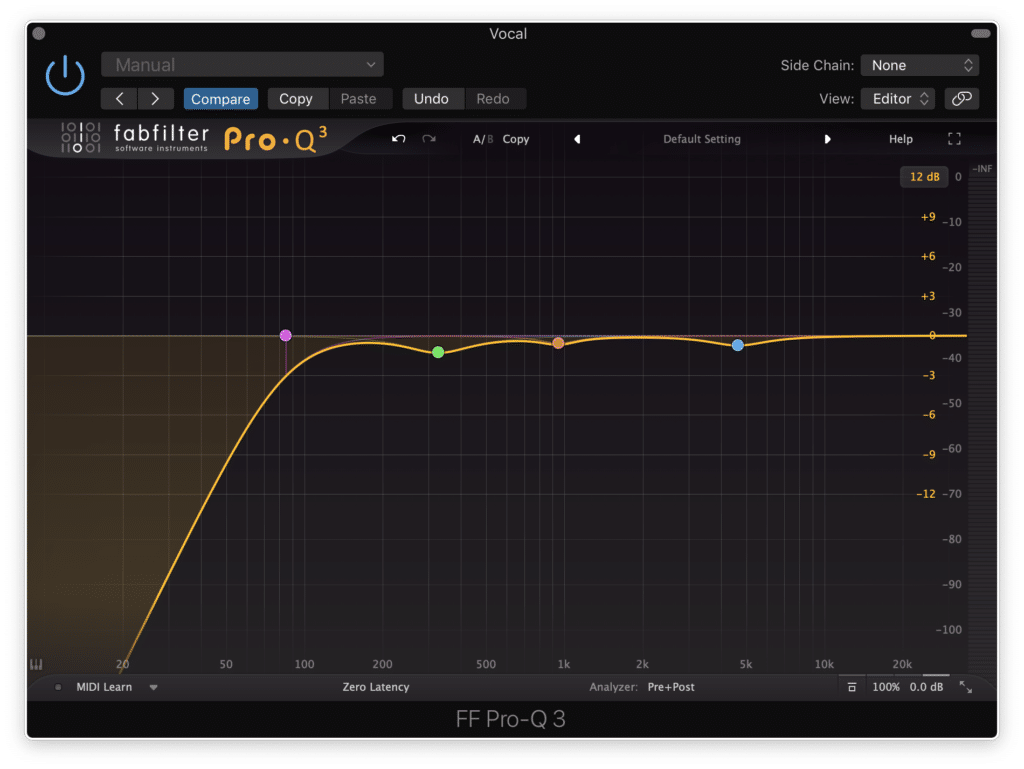

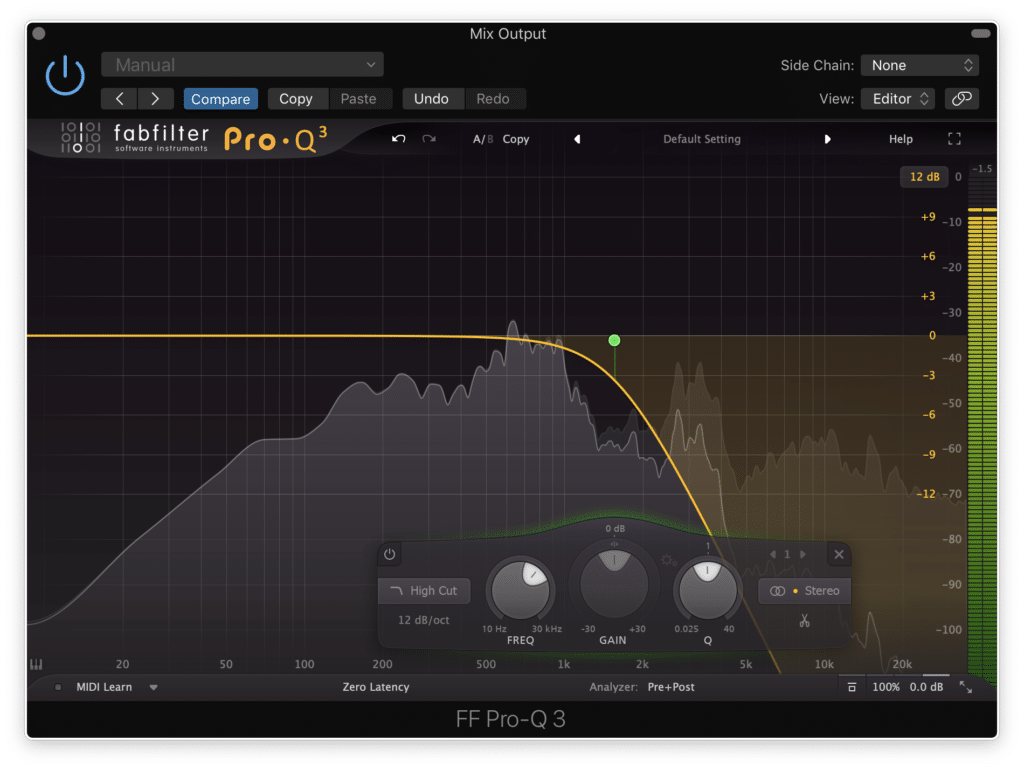

The first step would usually be subtractive equalization: equalization in which undesired frequencies are attenuated. By attenuating frequencies that make the vocal sound undefined, or that clash with frequencies occupied by the instrumentation, the engineer could create a lead vocal that is more easily perceived.

Subtractive equalization attenuates undesired frequencies before further processing.
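To make the idea of a subtractive cut concrete, here is a minimal Python sketch of a peaking-EQ biquad using the widely published RBJ Audio EQ Cookbook formulas. The function names and the 300 Hz, -4 dB, Q of 2 settings are hypothetical choices for illustration, not settings taken from this article; the code only computes the filter's coefficients and checks its frequency response.

```python
import cmath
import math


def peaking_eq(fs, f0, gain_db, q):
    """Peaking-EQ biquad coefficients (RBJ Audio EQ Cookbook formulas).

    A negative gain_db gives a subtractive cut, a positive one a boost.
    Returns (b, a), normalized so that a[0] == 1.
    """
    amp = 10 ** (gain_db / 40)  # square root of the linear gain at f0
    w0 = 2 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2 * q)
    b = [1 + alpha * amp, -2 * math.cos(w0), 1 - alpha * amp]
    a = [1 + alpha / amp, -2 * math.cos(w0), 1 - alpha / amp]
    return [c / a[0] for c in b], [c / a[0] for c in a]


def response_db(b, a, fs, freq):
    """Magnitude response of the biquad at `freq`, in dB."""
    z_inv = cmath.exp(-2j * math.pi * freq / fs)  # z^-1 on the unit circle
    num = b[0] + b[1] * z_inv + b[2] * z_inv ** 2
    den = a[0] + a[1] * z_inv + a[2] * z_inv ** 2
    return 20 * math.log10(abs(num / den))


# Hypothetical cut: 4 dB at 300 Hz with a Q of 2, at a 48 kHz sample rate.
b, a = peaking_eq(fs=48000, f0=300, gain_db=-4.0, q=2.0)
for freq in (100, 300, 1000, 5000):
    print(f"{freq} Hz: {response_db(b, a, 48000, freq):+.2f} dB")
```

Passing a positive `gain_db` to the same function (for example, +3 dB around 2 kHz) gives the kind of additive presence boost described later in this vocal chain.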

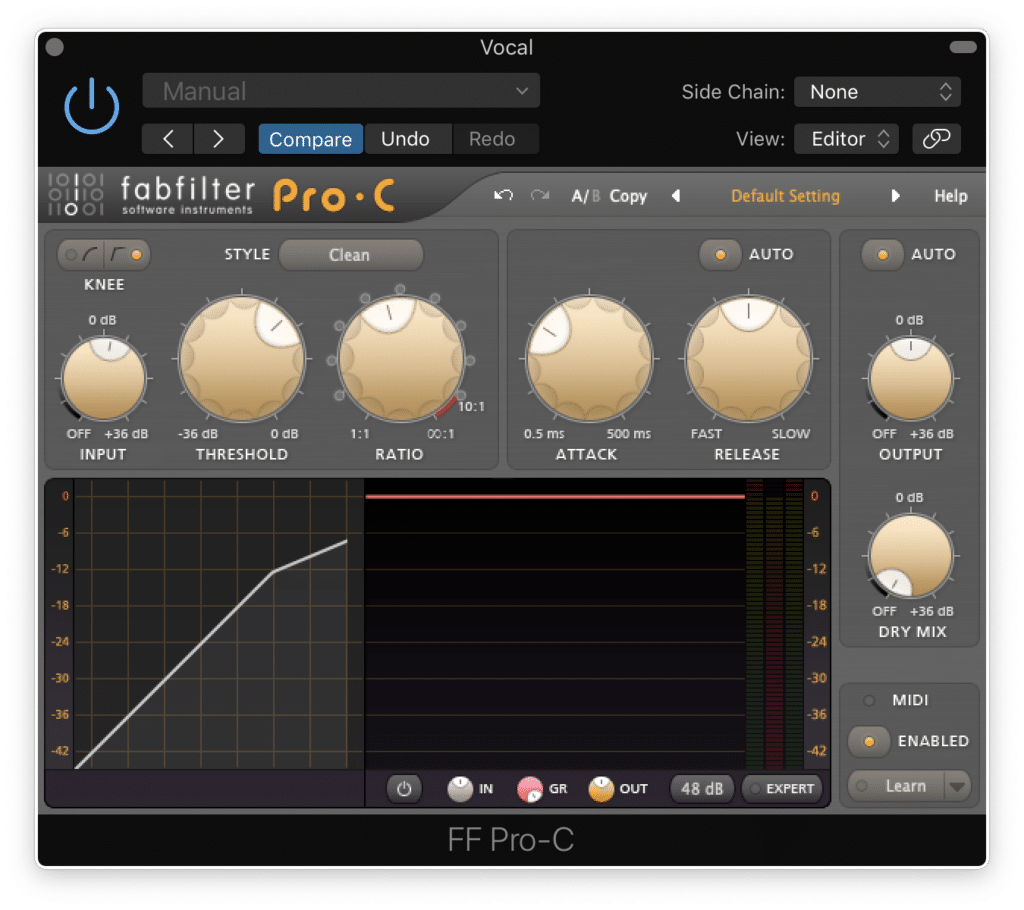

Next, the mixing engineer could use compression to attenuate the loudest, most dynamic moments of the vocal performance and, just as importantly, to make it possible to amplify the quieter moments with makeup gain. Doing so would make the more nuanced aspects of the performance perceivable to the listener and, in turn, create a more complex vocal recording.

Low-level compression amplifies harder-to-hear aspects of the recording.

After this, the mixing engineer may introduce some harmonic distortion in the form of analog emulation. By creating harmonics related to the vocal’s fundamental, the mixing engineer could make the vocal more easily perceivable, as well as increase its level by generating signal at frequencies that were previously unoccupied by the vocal.
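The effect of makeup gain is easiest to see on a compressor's static gain curve. The sketch below is a simplified, hard-knee downward compressor working on levels in dBFS; the threshold, ratio, and makeup values and the example levels are assumptions for illustration, and a real compressor also needs level detection plus attack and release smoothing, which are omitted here.

```python
def compress_db(level_db, threshold_db=-20.0, ratio=4.0, makeup_db=0.0):
    """Static (hard-knee) downward compressor curve, levels in dBFS.

    Above the threshold, every `ratio` dB of input yields 1 dB of output;
    makeup gain is then added to the whole signal.
    """
    if level_db > threshold_db:
        level_db = threshold_db + (level_db - threshold_db) / ratio
    return level_db + makeup_db


# Hypothetical vocal levels: a quiet phrase at -30 dBFS and a loud peak at -4 dBFS.
quiet, loud = -30.0, -4.0
print(compress_db(quiet, makeup_db=6.0))  # -24.0: the quiet phrase comes up 6 dB
print(compress_db(loud, makeup_db=6.0))   # -10.0: the peak is pulled down
```

With these settings, the 26 dB gap between the quiet phrase and the peak shrinks to 14 dB, and the quiet phrase ends up louder than it started.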

Analog emulators are used to generate harmonics often associated with classic hardware.
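Harmonic generation can be demonstrated by passing a pure sine wave through a saturating curve and measuring which frequencies appear. This sketch uses a `tanh` waveshaper as a generic stand-in for analog-style saturation (an assumption; real analog emulators model specific hardware and are usually more complex) and a single-bin DFT to measure each harmonic.

```python
import math


def saturate(samples, drive=3.0):
    """Symmetric tanh waveshaper, a generic stand-in for analog-style saturation."""
    return [math.tanh(drive * s) for s in samples]


def harmonic_amplitude(samples, fs, freq):
    """Amplitude of one frequency component, measured with a single-bin DFT."""
    n = len(samples)
    re = sum(s * math.cos(2 * math.pi * freq * i / fs) for i, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * freq * i / fs) for i, s in enumerate(samples))
    return 2 * math.hypot(re, im) / n


fs, f0 = 48000, 1000
# 4800 samples hold exactly 100 cycles of 1 kHz, so every harmonic lands on a DFT bin.
clean = [0.5 * math.sin(2 * math.pi * f0 * i / fs) for i in range(4800)]
driven = saturate(clean)

for k in (1, 2, 3):
    print(f"{k * f0} Hz: clean {harmonic_amplitude(clean, fs, k * f0):.4f}, "
          f"driven {harmonic_amplitude(driven, fs, k * f0):.4f}")
```

Because `tanh` is symmetric, the driven signal gains odd harmonics (3 kHz here) while even harmonics stay at essentially zero; an asymmetric curve, for instance one with a DC offset added before shaping, would add even harmonics as well.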

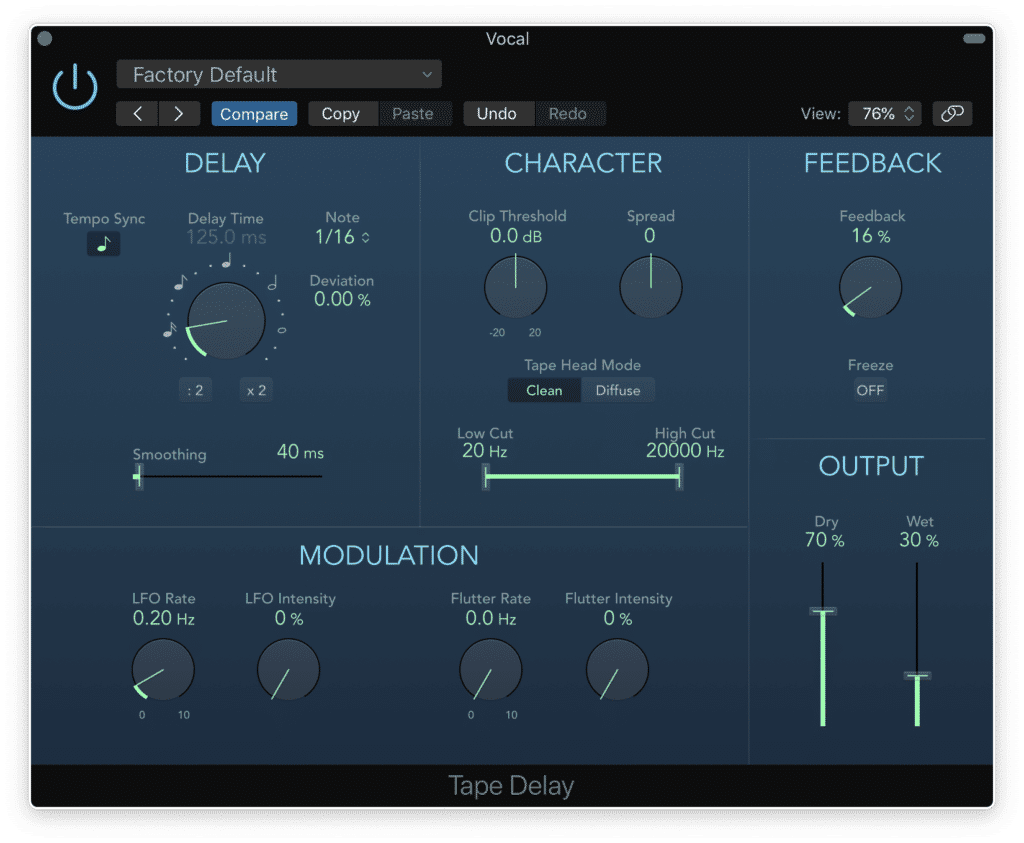

Once the vocal has been equalized with subtractive equalization, compressed to make the quieter aspects louder, and treated with analog emulation for the sake of harmonic generation, the vocal would most likely be run through temporal processors.

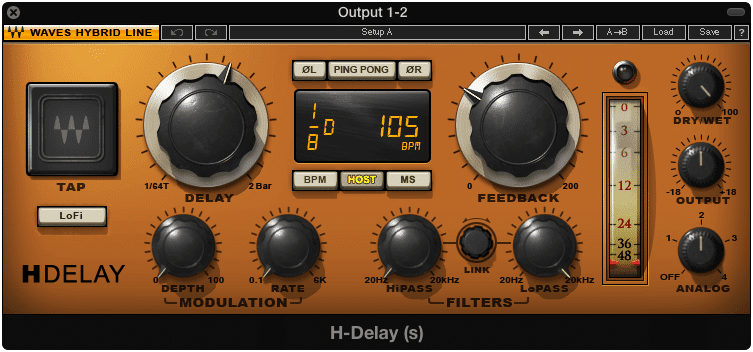

Delay can be used to thicken a lead vocal, and is an example of a temporal processor.

Temporal processing includes reverb, delay, and any other time-based effects. In this instance, a short delay of less than 130 ms would be used to make the vocal louder and more perceivable by increasing the amount of time it is present, while keeping it perceived as a single signal.

Lastly, further equalization could be used to boost and accentuate the area around 2 kHz, which would help the vocal stand out from the other instrumentation.
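A short delay used for thickening amounts to mixing the signal with one delayed copy of itself. This minimal sketch works on a plain list of samples; the 20 ms default and the 0.5 wet level are arbitrary illustrative values, and the 130 ms guard simply encodes the limit given in this article.

```python
def thicken(samples, fs, delay_ms=20.0, wet=0.5):
    """Mix a signal with one short, delayed copy of itself (a simple doubler).

    The 130 ms ceiling follows the rule of thumb in the text: shorter delays
    fuse with the original instead of being heard as a separate echo.
    """
    if not 0 < delay_ms < 130:
        raise ValueError("delay_ms should be between 0 and 130 ms for this effect")
    d = round(fs * delay_ms / 1000)  # delay in whole samples
    out = list(samples) + [0.0] * d  # room for the delayed tail
    for i, s in enumerate(samples):
        out[i + d] += wet * s
    return out


# An impulse makes the structure visible: the dry hit, then the copy 20 ms later.
y = thicken([1.0, 0.0, 0.0], fs=1000, delay_ms=20)
print(y[0], y[20], len(y))  # 1.0 0.5 23
```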

Additive equalization means adding more of the frequencies you want to hear.

This is just one example of how multiple forms of processing come together during the mixing process to achieve the desired effect.

If you’re trying to follow along with the example presented above, here is a step-by-step explanation:

Step 1: Use subtractive equalization to attenuate any frequencies that make the lead vocal sound unintelligible or that clash with the other instrumentation.

Find what frequencies make the vocal blend in too much, and slightly attenuate those frequencies.

Step 2: Use compression to both control dynamics and amplify quieter aspects of the recording. A low-level compressor would be a great option for this step.

When compressing, applying makeup gain afterward will raise the quieter aspects of the recording.

Step 3: Introduce harmonic distortion. This can be accomplished with distortion plugins or analog emulation plugins. Be careful not to overdo it, unless a distorted sound is desired.

Analog emulation can be used to add harmonics. This is typically accomplished with the drive function; however, some analog emulators add harmonics without the need to change any settings.

Step 4: Use a delay shorter than 130 ms to create a perceptibly thicker vocal without the perception of two separate signals. A delay time greater than 130 ms will result in two perceivable signals.

The delay used here would be great for thickening a vocal without making it sound like two separate sound sources.

What Comes After Mixing and How Does it Affect a Mixing Engineer?

Once the mixing process is complete, either the mixing engineer or the client will submit the mix or mixes for audio mastering. Since mastering processes the stereo file of a mix as a whole, a mixing engineer should not apply any processing that affects the entirety of the mix.

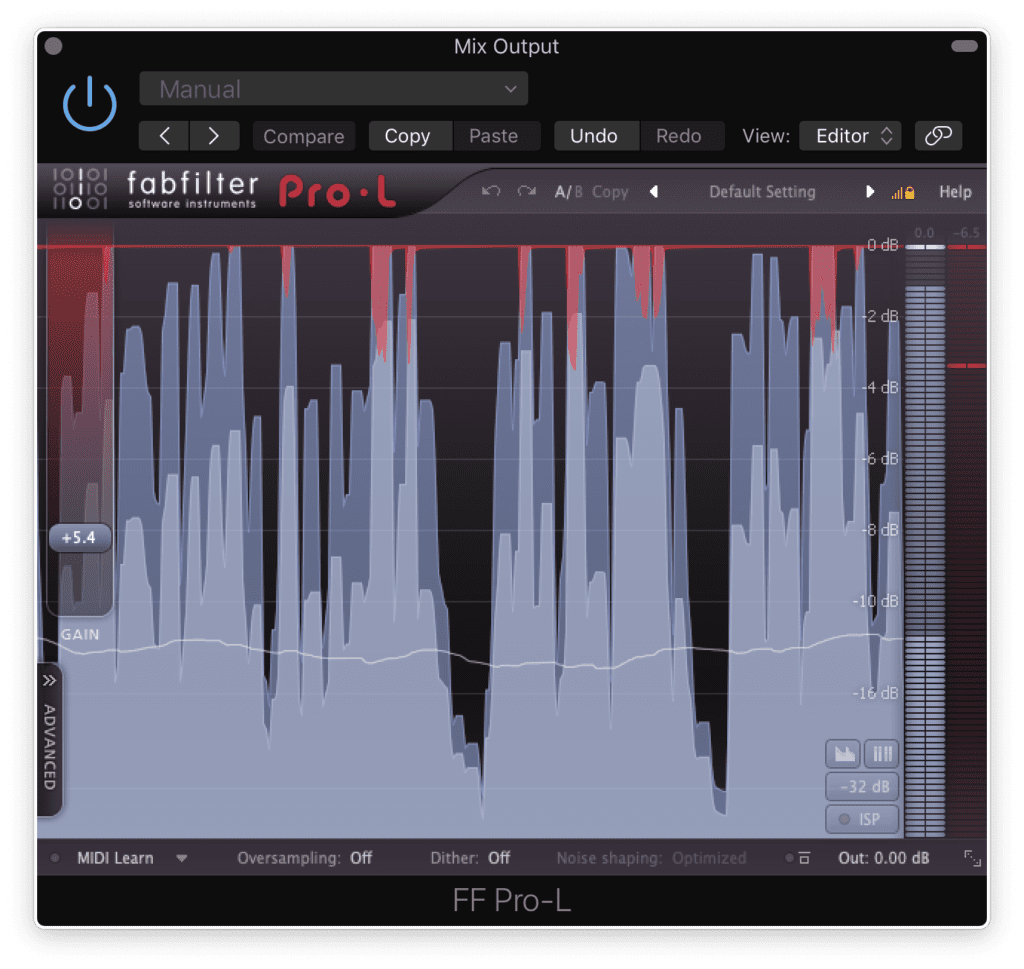

Mastering should affect the decision-making of a mixing engineer.

The processing a mixing engineer should avoid is any processing that affects the entire mix at once.

A mixing engineer should avoid:

- Limiting the Output

- Compressing the Output

- Equalizing the Output

- Distorting the Output

Or any other form of processing in which the entire mix is processed collectively.

Sometimes, mixing engineers add limiters to the master output; however, this should be avoided.

The reason for this is that much of this processing will be, and should be, handled by a mastering engineer. Despite this, many mixing engineers make the mistake of adding this type of processing to their entire mix.

If you’re a mixing engineer, this is alright to do if your sole intention is to offer a client a preview of what you’ve been mixing; however, it should not be done to any mix which will be sent to mastering.

Making a mix sound like a faux master can be done for a listener to preview, but not for a mix being sent off to mastering.

Any mixing engineer who does this is simply making the mastering engineer’s job more difficult, and possibly exacerbating flaws or issues in the mix that could otherwise have been addressed during a proper mastering session.

Mastering is used to help remedy some of the mistakes made during the mixing process. This means mastering engineers need sonic room to work on a mix.

A mixing engineer should not take on the role of mastering and should fight the inclination to process a mix to sound closer to the “finished” product.

With that said, a mixing engineer understands that more processing will occur after their job is finished. Part of mixing correctly is ensuring that this further processing can occur and can be used to remedy or accentuate any aspects of the mix that need addressing.

A big part of being a mixing engineer is allowing mastering engineers to do their job properly.

Furthermore, a mixing engineer should not export the mix at a loud level that closely mimics the final loudness the track will be mastered to. Leaving enough headroom for a mastering engineer to work with is crucial to delivering a good mix.
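Before exporting, it helps to check how far the mix’s peaks sit below full scale. The sketch below measures sample peaks in dBFS for floating-point audio; the 6 dB headroom target is an assumed value for illustration (this article does not specify one, so confirm the figure with your mastering engineer), and sample peaks can underestimate inter-sample (true) peaks.

```python
import math


def peak_dbfs(samples):
    """Sample-peak level in dBFS, where full scale (1.0) is 0 dBFS."""
    peak = max(abs(s) for s in samples)
    return float("-inf") if peak == 0 else 20 * math.log10(peak)


def has_headroom(samples, min_headroom_db=6.0):
    """True if the sample peak sits at least min_headroom_db below full scale.

    The 6 dB default is an assumed target, not a universal standard.
    """
    return peak_dbfs(samples) <= -min_headroom_db


mix = [0.1, -0.5, 0.42, -0.3]  # hypothetical floating-point mix samples
print(round(peak_dbfs(mix), 2))  # -6.02
print(has_headroom(mix))         # True
```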

How Can Mixing Be Used for Creative Effect

Mixing techniques can be used to closely mirror the emotional content or context of a song by introducing forms of processing that evoke or augment that emotional content. One example of mixing for creative effect is using reverb to create a larger-than-life or ethereal feeling.

Of course, this example is a pretty broad one but does demonstrate how processing can evoke a feeling nonetheless.

One particularly interesting example I came across recently was in Billie Eilish’s new song (or at least new at the time of writing) “Everything I Wanted.”

The album artwork for "Everything I Wanted."

In the song, Eilish sings about being in a confusing dreamscape, in which where she truly is remains a mystery. Fittingly, the instrumentation is tonally muted, with the high end almost nonexistent, creating a lack of definition similar to how a blur would be used in film or photography.

Audio effects can be used in a similar conceptual way to video and photography effects.

Similarly, distortion is used to further evoke a lack of clarity in both the instrumental and Eilish’s voice.

All of this is somewhat subtle until Eilish sings the line “my head was underwater,” at which point an equalizer is automated to attenuate the high-frequency spectrum. From the listener’s perspective, it sounds as if Eilish’s voice is coming from underwater.

To accomplish this effect, all you’d need to do is apply a low-pass filter.

This clever trick, although perhaps a common and well-known technique amongst mixing engineers, creates greater immersion for the listener. Keep in mind that most listeners aren’t thinking about these effects the way an audio engineer would, and to them, something like the underwater effect just mentioned can be truly special.
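The underwater moment combines a low-pass filter with automation. Here is a minimal sketch under simplifying assumptions: a one-pole low-pass, whose gentle 6 dB/octave slope is milder than the steeper filters an equalizer would typically offer, and a per-sample list of cutoff values standing in for an automation lane. The 20 kHz and 500 Hz cutoffs and the helper names are hypothetical.

```python
import math


def automated_lowpass(samples, fs, cutoffs_hz):
    """One-pole low-pass filter whose cutoff may change on every sample.

    cutoffs_hz supplies one cutoff per sample, the way an automation lane
    would drive the filter. The slope is only 6 dB/octave.
    """
    out, y = [], 0.0
    for x, fc in zip(samples, cutoffs_hz):
        coeff = 1 - math.exp(-2 * math.pi * fc / fs)  # smoothing amount for this cutoff
        y += coeff * (x - y)
        out.append(y)
    return out


def ramp(start, end, n):
    """Linear automation ramp of n values (n >= 2) from start to end."""
    return [start + (end - start) * i / (n - 1) for i in range(n)]


fs = 48000
n = fs // 10  # 100 ms of audio
bright = [1.0 if i % 2 == 0 else -1.0 for i in range(n)]  # energy at the Nyquist frequency

open_filter = automated_lowpass(bright, fs, [20000.0] * n)
underwater = automated_lowpass(bright, fs, [500.0] * n)
swept = automated_lowpass(bright, fs, ramp(20000.0, 500.0, n))  # cutoff swept down over time

for name, sig in (("open", open_filter), ("underwater", underwater), ("swept", swept)):
    print(name, round(max(abs(v) for v in sig[-100:]), 3))
```

The open setting lets most of the top end through, while the 500 Hz setting (and the end of the sweep) removes most of it, which is the dulling the listener hears as “underwater.”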

Moments like this in a mix can help listeners better relate to the song.

In a similar fashion, when Eilish sings the word “fly,” a delay and an airy reverb are used to emulate that sensation. The delayed versions of the word are made more open using equalization, primarily a boost in the 10 kHz area, giving the listener a tonal cue that relates to the imagery of the song’s lyrics.

These are the types of decisions a mixing engineer can make that take the vocation of mixing from a purely technical one to a creative and artistic endeavor. Ultimately, these types of techniques bring depth and complexity to a mix, as they serve to augment the listening experience both sonically and emotionally.
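The repeating echoes on a word like “fly” can come from a feedback delay, in which each repeat is a quieter copy of the one before. This sketch shows only that part; the 250 ms delay time and 0.5 feedback are assumed values rather than the settings used on the song, and the airy reverb and the 10 kHz boost on the repeats would be separate processors applied to the returned wet signal.

```python
def echo_tail(samples, fs, delay_ms=250.0, feedback=0.5, tail_s=1.0):
    """Wet-only feedback delay: each repeat is `feedback` times the one before.

    The output is extended by tail_s seconds so the repeats can ring out.
    """
    if not 0 <= feedback < 1:
        raise ValueError("feedback must be in [0, 1) for the repeats to die away")
    d = round(fs * delay_ms / 1000)  # delay in whole samples
    n = len(samples) + round(fs * tail_s)
    dry = list(samples) + [0.0] * (n - len(samples))
    wet = [0.0] * n
    for i in range(d, n):
        wet[i] = dry[i - d] + feedback * wet[i - d]
    return wet


# An impulse at a 1 kHz sample rate shows a repeat every 250 samples.
wet = echo_tail([1.0], fs=1000)
print([wet[i] for i in (250, 500, 750, 1000)])  # [1.0, 0.5, 0.25, 0.125]
```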

When a mixing engineer can introduce these types of creative elements into a mix, mixing becomes an art form in and of itself.

What Techniques Do Mixing Engineers Use?

There are multiple techniques that mixing engineers can use to achieve desired effects; however, here are some of the newest and most popular techniques that can help create an enjoyable and well-balanced mix:

- Inverse Equalization Matching

- Low-Level Compression following Harmonic Generation

- Psychoacoustic Spatial Design

Granted, these are just a few of the many techniques a mixing engineer can use to create a balanced, interesting, and especially complex mix. Let’s briefly discuss what these techniques are.

What is Inverse Equalization Matching?

Inverse equalization matching uses equalization-matching software to match the frequency spectra of two similar sound sources, such as a lead guitar and a rhythm guitar. Once matched, the matching equalization curve applied to one of them is inverted, accentuating the frequencies that make the two sound sources distinct from one another.
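The core arithmetic of inverse equalization matching can be sketched with per-band levels. Assuming the band levels of both guitars have already been measured (for example, from an averaged spectrum), the matching curve is the per-band difference between target and source, and inverting it means negating that difference. The band levels, the four-band resolution, and the ±6 dB clamp are hypothetical; matching-EQ software typically works at a much finer frequency resolution.

```python
def match_curve(source_db, target_db):
    """Per-band gain (dB) that would make the source's spectrum match the target's."""
    return [t - s for s, t in zip(source_db, target_db)]


def inverse_match_curve(source_db, target_db, max_db=6.0):
    """Negated matching curve, clamped to +/- max_db.

    Negating (target - source) gives (source - target): bands where the source
    already differs from the target are pushed further in the same direction.
    """
    return [max(-max_db, min(max_db, s - t)) for s, t in zip(source_db, target_db)]


# Hypothetical averaged band levels in dB for two guitar parts.
bands_hz = [125, 500, 2000, 8000]
rhythm_gtr = [-6.0, -3.0, -9.0, -18.0]
lead_gtr = [-9.0, -3.0, -4.0, -14.0]

print(match_curve(lead_gtr, rhythm_gtr))  # [3.0, 0.0, -5.0, -4.0]
for f, g in zip(bands_hz, inverse_match_curve(lead_gtr, rhythm_gtr)):
    print(f"{f} Hz: {g:+.1f} dB on the lead guitar")
```

Here the lead guitar is already brighter than the rhythm part, so the inverted curve boosts its upper bands further and cuts its low end, widening the tonal gap between the two.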

What is Low-level Compression?

Low-level compression is the process of capturing, compressing, and amplifying the quieter aspects of a recording, instead of simply attenuating the louder dynamics. If harmonic generation is followed by low-level compression, the generated harmonics become even more perceivable to the listener.
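Low-level compression as described here raises quiet material rather than only pulling down peaks, which is usually called upward compression. The sketch below shows a static upward-compression curve; the threshold, ratio, and the 12 dB cap on added gain (which keeps the noise floor from being raised without limit) are illustrative assumptions, and attack and release behavior is left out.

```python
def upward_compress_db(level_db, threshold_db=-40.0, ratio=2.0, max_gain_db=12.0):
    """Static upward-compressor curve, levels in dBFS.

    Below the threshold, the distance to the threshold is divided by `ratio`,
    so quiet detail is raised; levels at or above the threshold pass unchanged.
    The added gain is capped at max_gain_db.
    """
    if level_db >= threshold_db:
        return level_db
    gain = min((threshold_db - level_db) * (1 - 1 / ratio), max_gain_db)
    return level_db + gain


print(upward_compress_db(-60.0))  # -50.0: quiet detail (e.g. faint harmonics) raised 10 dB
print(upward_compress_db(-20.0))  # -20.0: louder material untouched
print(upward_compress_db(-80.0))  # -68.0: gain capped at 12 dB
```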

What is Psychoacoustic Spatial Design?

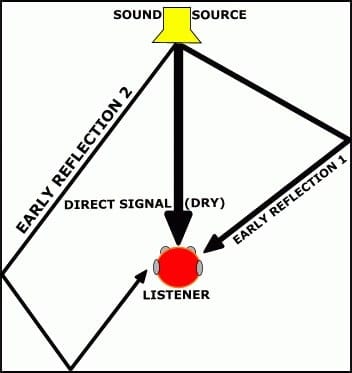

Psychoacoustic spatial design is the process of using particular psychoacoustic techniques to affect a listener’s perception of the width and depth of a stereo sound source. These psychoacoustic effects are typically created using temporal processing; however, equalization can also be used to accomplish them.
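One well-known temporal technique for widening is the Haas (precedence) effect: delaying one channel of a mono source by a few milliseconds, so the listener tends to hear one wider-sounding source located toward the earlier channel rather than two separate sounds. This article does not name a specific technique, so treat this as one example; the 15 ms default is an assumed value, and the result should be checked in mono, since summing a signal with a delayed copy of itself causes comb filtering.

```python
def haas_widen(mono, fs, delay_ms=15.0):
    """Return (left, right) where the right channel is a delayed copy of the left.

    Both channels are padded to the same length.
    """
    d = round(fs * delay_ms / 1000)  # inter-channel delay in whole samples
    left = list(mono) + [0.0] * d
    right = [0.0] * d + list(mono)
    return left, right


# An impulse at a 1 kHz sample rate: left plays immediately, right 15 samples later.
left, right = haas_widen([1.0, 0.0], fs=1000)
print(left.index(1.0), right.index(1.0), len(left) == len(right))  # 0 15 True
```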

Conclusion

Understanding what a mixing engineer is would entail understanding all the forms of processing that can be used during a mixing session; however, despite the complexity of the role, the examples provided above hopefully offer some insight.

Mixing can be both a technical and a creative profession, but within music production as a whole, mixing is arguably the most creative part of the process.

Being a mixing engineer means understanding multiple forms of processing and how they can come together to create the desired effect. It also means knowing how to implement certain effects to augment the emotional elements of the music being mixed.

Furthermore, it means knowing what to do, and what not to do, when preparing a mix for a mastering session. Again, the mixing engineer is not responsible for processing the entirety of the mix with plugins or hardware on the output. Doing so will make the mastering engineer’s role more difficult and less productive.

Lastly, if you intend to become a mixing engineer, or are simply curious about the profession, it wouldn’t hurt to understand some of the techniques mixing engineers use to create a balanced and enjoyable mix.

Some of these techniques include inverse equalization matching, low-level compression following harmonic generation, and psychoacoustic spatial design. Although these are certainly not all of the techniques used in mixing, they do offer some insight into the mixing process and, in turn, into what a mixing engineer is.
